up

Some checks failed

Docs CI / lint-and-preview (push) Has been cancelled

AOC Guard CI / aoc-guard (push) Has been cancelled

AOC Guard CI / aoc-verify (push) Has been cancelled

api-governance / spectral-lint (push) Has been cancelled

oas-ci / oas-validate (push) Has been cancelled

Policy Lint & Smoke / policy-lint (push) Has been cancelled

Policy Simulation / policy-simulate (push) Has been cancelled

SDK Publish & Sign / sdk-publish (push) Has been cancelled



11

.claude/settings.local.json

Normal file


@@ -0,0 +1,11 @@

{
  "permissions": {
    "allow": [
      "Bash(dotnet build:*)",
      "Bash(dotnet restore:*)",
      "Bash(chmod:*)"
    ],
    "deny": [],
    "ask": []
  }
}

219

CLAUDE.md

Normal file


@@ -0,0 +1,219 @@

# CLAUDE.md

This file provides guidance to Claude Code (claude.ai/code) when working with code in this repository.

## Project Overview

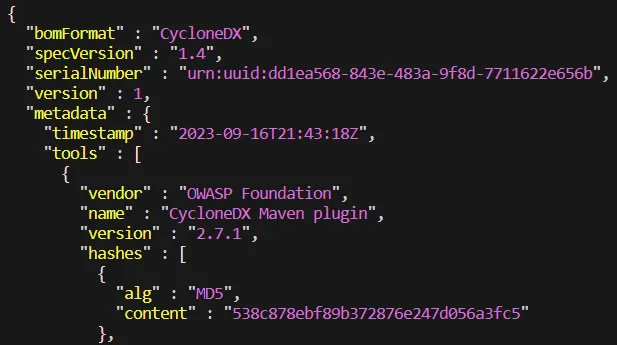

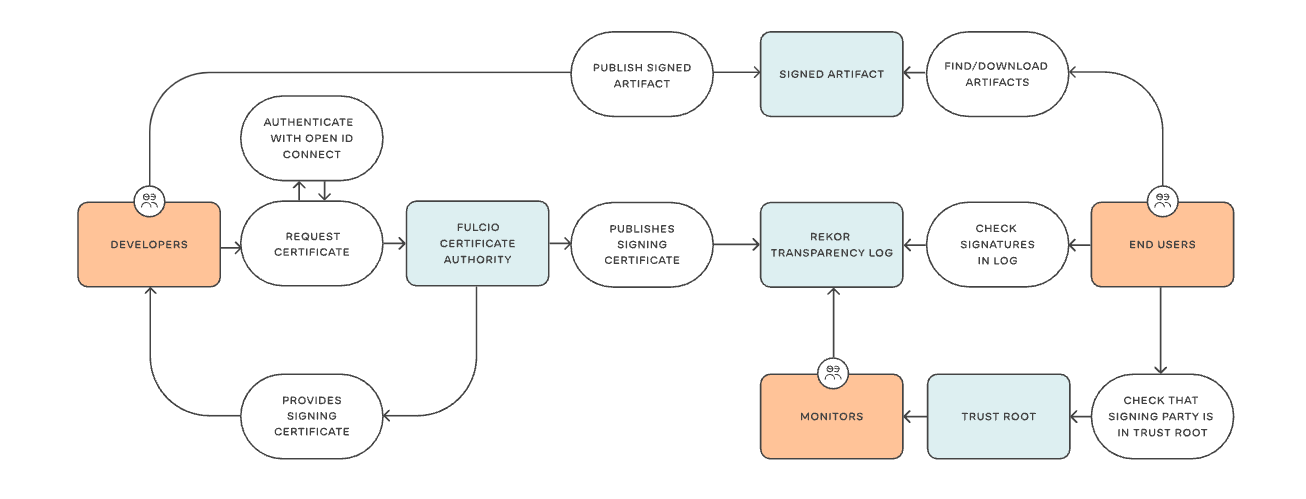

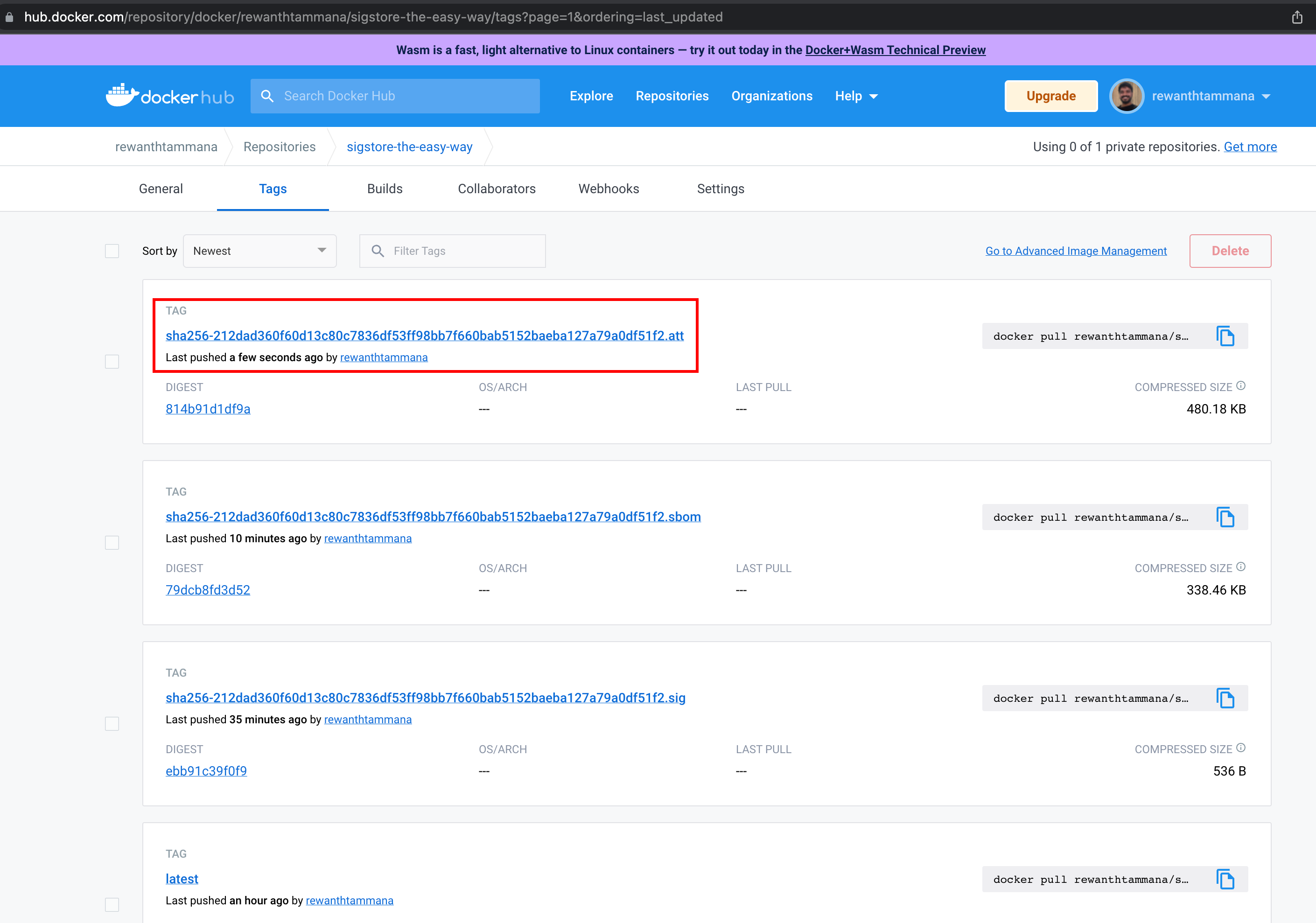

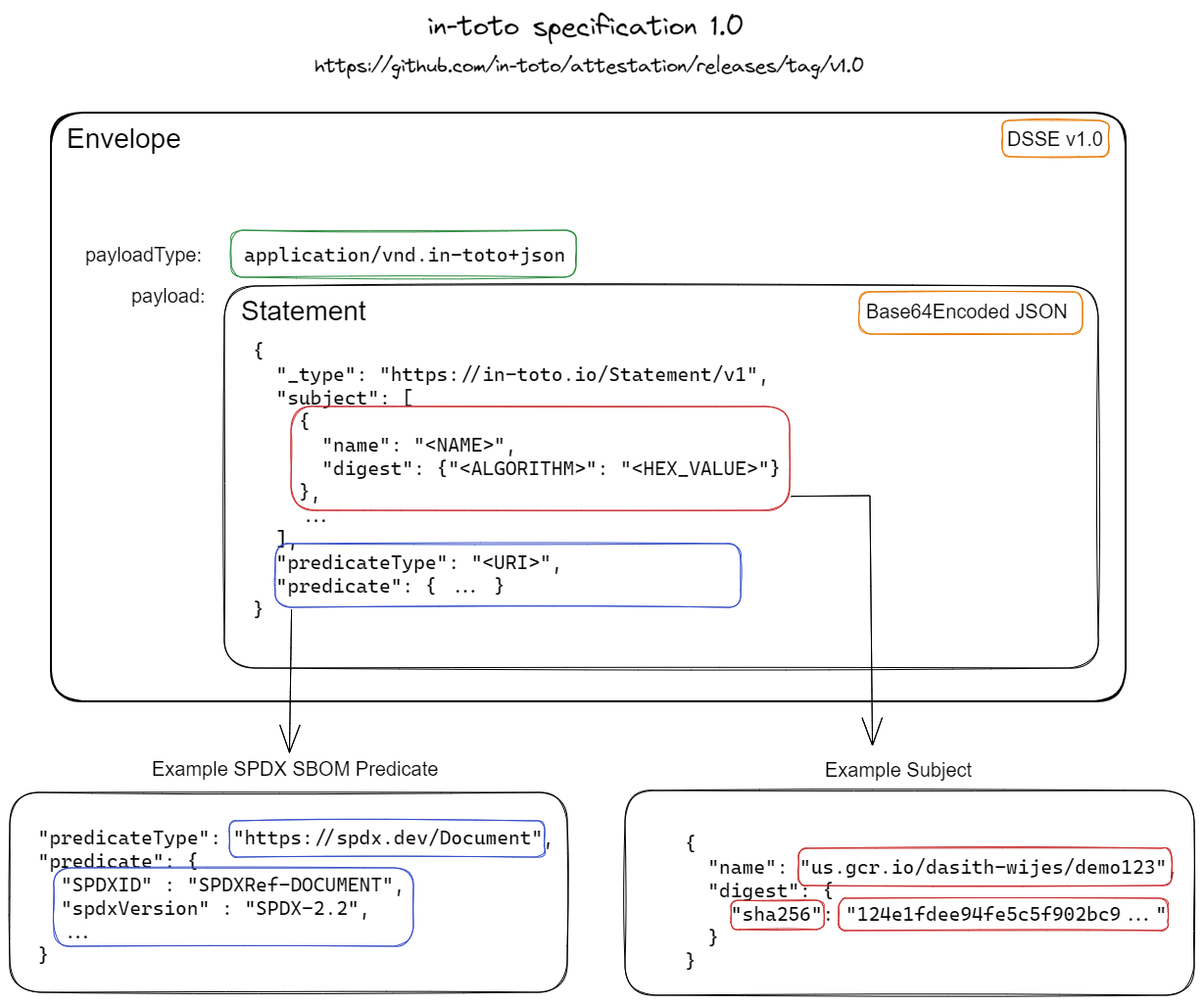

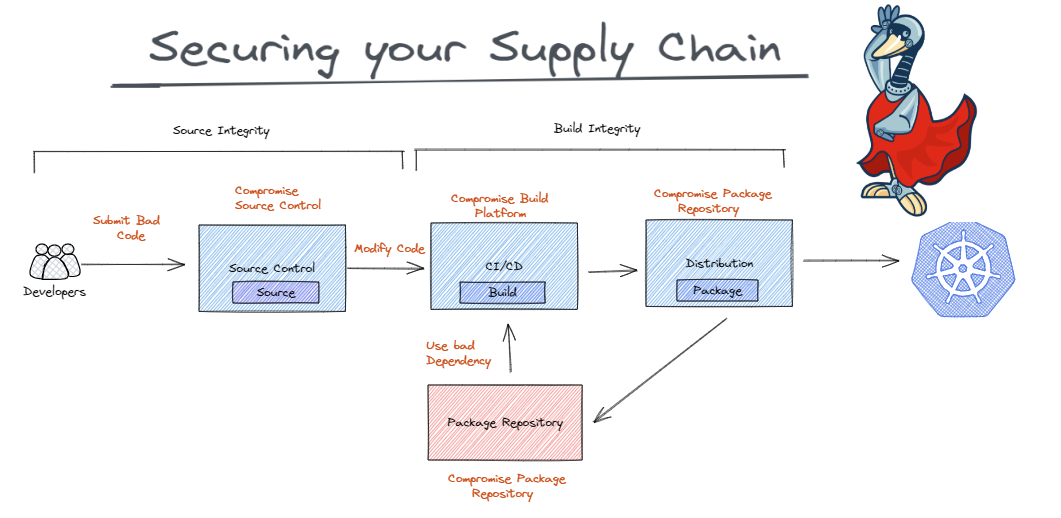

StellaOps is a self-hostable, sovereign container-security platform released under AGPL-3.0-or-later. It provides reproducible vulnerability scanning with VEX-first decisioning, SBOM generation (SPDX 3.0.1 and CycloneDX 1.6), in-toto/DSSE attestations, and optional Sigstore Rekor transparency. The platform is designed for offline/air-gapped operation with regional crypto support (eIDAS/FIPS/GOST/SM).

## Build Commands

```bash
# Build the entire solution
dotnet build src/StellaOps.sln

# Build a specific module (example: Concelier web service)
dotnet build src/Concelier/StellaOps.Concelier.WebService/StellaOps.Concelier.WebService.csproj

# Run the Concelier web service
dotnet run --project src/Concelier/StellaOps.Concelier.WebService

# Build CLI for current platform
dotnet publish src/Cli/StellaOps.Cli/StellaOps.Cli.csproj --configuration Release

# Build CLI for specific runtime (linux-x64, linux-arm64, osx-x64, osx-arm64, win-x64)
dotnet publish src/Cli/StellaOps.Cli/StellaOps.Cli.csproj --configuration Release --runtime linux-x64
```
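To build every runtime listed above in one pass, the publish command can be looped over the runtime identifiers (RIDs). This is a minimal sketch, not a script from the repository: the `publish_all_cli` function, the `out/cli/<rid>` output folders, and the `DOTNET` override (used so the loop can be dry-run with `DOTNET=echo`) are hypothetical. Only the project path, the RID list, and the standard `dotnet publish` options (`--configuration`, `--runtime`, `--output`) come from the commands above or the .NET CLI.

```bash
# Hypothetical helper: publish the StellaOps CLI once per runtime identifier (RID).
# Set DOTNET=echo to print the commands instead of running them.
publish_all_cli() {
  local project="src/Cli/StellaOps.Cli/StellaOps.Cli.csproj"
  local rid
  for rid in linux-x64 linux-arm64 osx-x64 osx-arm64 win-x64; do
    # Each RID gets its own output folder so the builds do not overwrite each other.
    "${DOTNET:-dotnet}" publish "$project" \
      --configuration Release \
      --runtime "$rid" \
      --output "out/cli/$rid" || return 1
  done
}
```

Running `DOTNET=echo publish_all_cli` prints the five commands without building anything; calling `publish_all_cli` with no override runs them and stops at the first runtime that fails.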

## Test Commands

```bash
# Run all tests
dotnet test src/StellaOps.sln

# Run tests for a specific project
dotnet test src/Scanner/__Tests/StellaOps.Scanner.WebService.Tests/StellaOps.Scanner.WebService.Tests.csproj

# Run a single test by filter (~ matches a substring of the fully qualified name)
dotnet test src/StellaOps.sln --filter "FullyQualifiedName~TestMethodName"

# Run tests with normal verbosity
dotnet test src/StellaOps.sln --verbosity normal
```

**Note:** Tests use Mongo2Go, which requires OpenSSL 1.1 on Linux. If needed, run `scripts/enable-openssl11-shim.sh` before testing.

## Linting and Validation

```bash
# Lint OpenAPI specs
npm run api:lint

# Validate attestation schemas
npm run docs:attestor:validate

# Validate Helm chart
helm lint deploy/helm/stellaops
```

## Architecture

### Technology Stack

- **Runtime:** .NET 10 (`net10.0`) with latest C# preview features
- **Frontend:** Angular v17 (in `src/UI/StellaOps.UI`)
- **Database:** MongoDB (driver version ≥ 3.0)
- **Testing:** xUnit with Mongo2Go, Moq, Microsoft.AspNetCore.Mvc.Testing
- **Observability:** Structured logging, OpenTelemetry traces
- **NuGet:** Use the single curated feed and cache at `local-nugets/`

### Module Structure

The codebase follows a monorepo pattern with modules under `src/`:

| Module | Path | Purpose |
|--------|------|---------|
| Concelier | `src/Concelier/` | Vulnerability advisory ingestion and merge engine |
| CLI | `src/Cli/` | Command-line interface for scanner distribution and job control |
| Scanner | `src/Scanner/` | Container scanning with SBOM generation |
| Authority | `src/Authority/` | Authentication and authorization |
| Signer | `src/Signer/` | Cryptographic signing operations |
| Attestor | `src/Attestor/` | in-toto/DSSE attestation generation |
| Excititor | `src/Excititor/` | VEX document ingestion and export |
| Policy | `src/Policy/` | OPA/Rego policy engine |
| Scheduler | `src/Scheduler/` | Job scheduling and queue management |
| Notify | `src/Notify/` | Notification delivery (Email, Slack, Teams) |
| Zastava | `src/Zastava/` | Container registry webhook observer |

### Code Organization Patterns

- **Libraries:** `src/<Module>/__Libraries/StellaOps.<Module>.*`
- **Tests:** `src/<Module>/__Tests/StellaOps.<Module>.*.Tests/`
- **Plugins:** Follow naming `StellaOps.<Module>.Connector.*` or `StellaOps.<Module>.Plugin.*`
- **Shared test infrastructure:** `StellaOps.Concelier.Testing` provides MongoDB fixtures

### Naming Conventions

- All modules are .NET 10 projects, except the UI (Angular)
- Module projects: `StellaOps.<ModuleName>`
- Libraries/plugins common to multiple modules: `StellaOps.<LibraryOrPlugin>`
- Each project lives in its own folder

### Key Glossary

- **OVAL** — Vendor/distro security definition format; authoritative for OS packages
- **NEVRA / EVR** — RPM and Debian version semantics for OS packages
- **PURL / SemVer** — Coordinates and version semantics for OSS ecosystems
- **KEV** — Known Exploited Vulnerabilities (flag only)

|

||||

|

||||

## Coding Rules

|

||||

|

||||

### Core Principles

|

||||

|

||||

1. **Determinism:** Outputs must be reproducible - stable ordering, UTC ISO-8601 timestamps, immutable NDJSON where applicable

|

||||

2. **Offline-first:** Remote host allowlist, strict schema validation, avoid hard-coded external dependencies unless explicitly allowed

|

||||

3. **Plugin architecture:** Concelier connectors, Authority plugins, Scanner analyzers are all plugin-based

|

||||

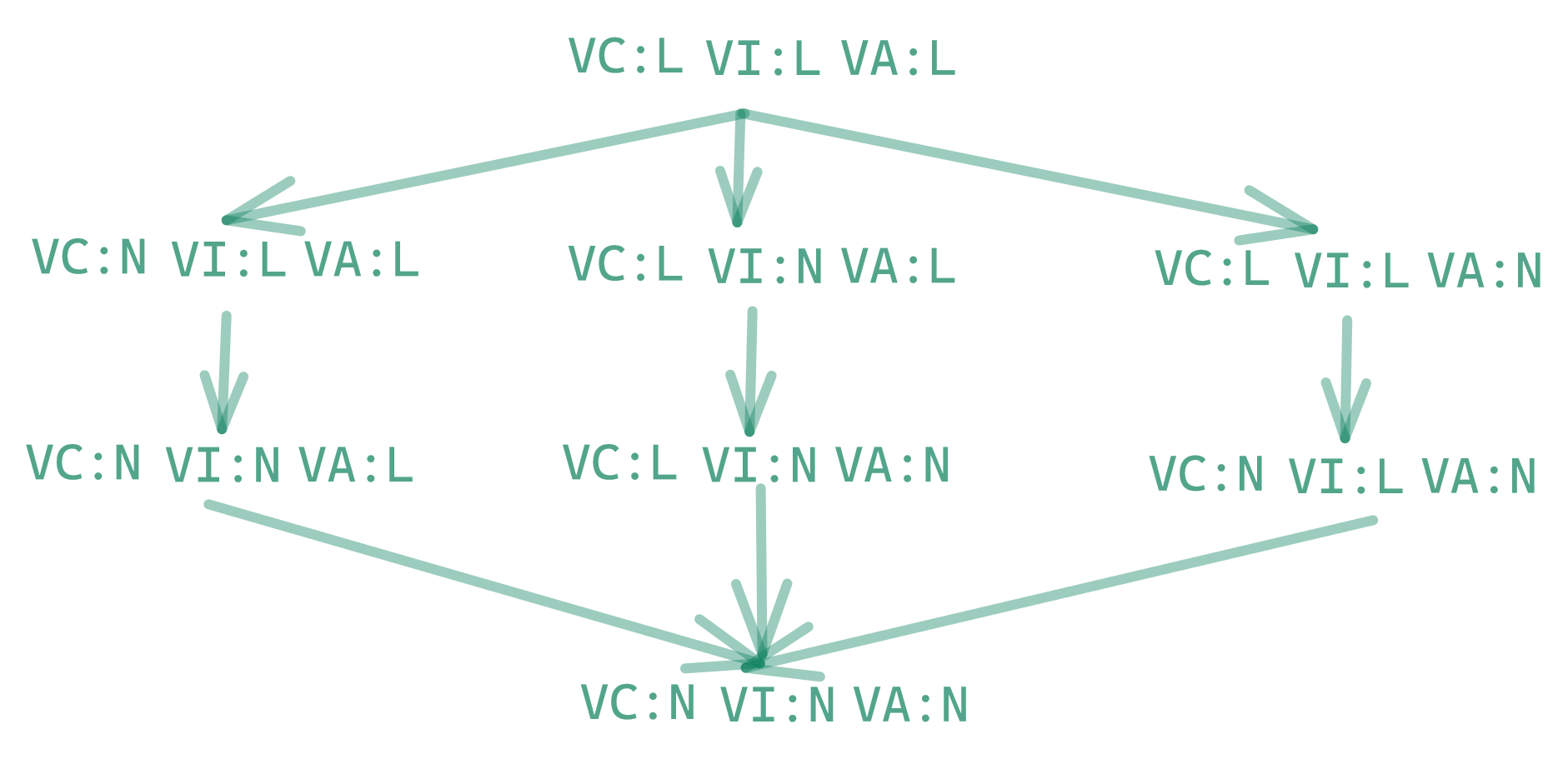

4. **VEX-first decisioning:** Exploitability modeled in OpenVEX with lattice logic for stable outcomes
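The determinism rules above can be illustrated with a few shell helpers. The sketch below is hypothetical (none of these functions exist in the repository) and assumes GNU coreutils for `sha256sum`; on macOS, `shasum -a 256` is the usual substitute. It shows why `LC_ALL=C` matters: locale-aware collation can order the same lines differently on machines with different locales, while byte-wise ordering cannot.

```bash
# Hypothetical helpers (not part of the repository) illustrating the determinism rules.

# UTC timestamp in ISO-8601 form, e.g. 2025-11-27T09:30:00Z
utc_now() {
  date -u +%Y-%m-%dT%H:%M:%SZ
}

# Byte-wise line ordering that does not depend on the caller's locale.
stable_sort() {
  LC_ALL=C sort
}

# SHA-256 over the stably sorted input, so the digest does not depend on input order.
stable_digest() {
  stable_sort | sha256sum | cut -d ' ' -f 1
}
```

For example, `printf 'x\ny\n' | stable_digest` and `printf 'y\nx\n' | stable_digest` print the same digest.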

### Implementation Guidelines

- Follow .NET 10 and Angular v17 best practices
- Maximise reuse and composability
- Never regress determinism, ordering, or precedence
- Every change must be accompanied or covered by tests
- Use LLMs only where explicitly configured (gated usage)

### Test Layout

- Module tests: `StellaOps.<Module>.<Component>.Tests`
- Shared fixtures/harnesses: `StellaOps.<Module>.Testing`
- Tests use xUnit, with Mongo2Go for MongoDB integration tests

### Documentation Updates

When scope, contracts, or workflows change, update the relevant docs under:

- `docs/modules/**` - Module architecture dossiers
- `docs/api/` - API documentation
- `docs/risk/` - Risk documentation
- `docs/airgap/` - Air-gap operation docs

## Role-Based Behavior

When working in this repository, behavior changes based on the specified role:

### As Implementer (Default for coding tasks)

- Work only inside the module's directory defined by the sprint's "Working directory"
- Cross-module edits require explicit notes in commit/PR descriptions
- Do **not** ask clarifying questions. If ambiguity exists:
  - Mark the task as `BLOCKED` in the sprint `Delivery Tracker`
  - Add a note in `Decisions & Risks` describing the issue
  - Skip to the next unblocked task
- Maintain status tracking: `TODO → DOING → DONE/BLOCKED` in sprint files
- Read the module's `AGENTS.md` before coding in that module

### As Project Manager

- Sprint files follow the format `SPRINT_<IMPLID>_<BATCHID>_<SPRINTID>_<topic>.md`
- IMPLID epochs: `1000` basic libraries, `2000` ingestion, `3000` backend services, `4000` CLI/UI, `5000` docs, `6000` marketing
- Normalize sprint files to the standard template while preserving content
- Ensure module `AGENTS.md` files exist and are up to date
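The naming rule and epoch table above can be checked mechanically. The sketch below is hypothetical (the `sprint_epoch` helper is not in the repository) and assumes that an IMPLID belongs to the epoch whose thousand-band it falls in (for example, `3120` maps to backend services); the source only lists the epoch anchors, so adjust the mapping if sprints are numbered differently. It only requires the IMPLID to be numeric and makes no assumption about the format of `<BATCHID>` or `<SPRINTID>`.

```bash
# Hypothetical helper: report the IMPLID epoch of a sprint file named
# SPRINT_<IMPLID>_<BATCHID>_<SPRINTID>_<topic>.md
sprint_epoch() {
  local name implid n
  name=$(basename "$1")
  case $name in
    SPRINT_*_*_*_*.md) ;;
    *) echo "not a sprint file: $name" >&2; return 1 ;;
  esac
  implid=${name#SPRINT_}   # drop the SPRINT_ prefix
  implid=${implid%%_*}     # keep everything before the next underscore
  case $implid in
    ''|*[!0-9]*) echo "IMPLID is not numeric: $implid" >&2; return 1 ;;
  esac
  # Strip leading zeros so shell arithmetic does not read the value as octal.
  n=$(printf '%s' "$implid" | sed 's/^0*//')
  case $(( ${n:-0} / 1000 )) in
    1) echo "basic libraries" ;;
    2) echo "ingestion" ;;
    3) echo "backend services" ;;
    4) echo "CLI/UI" ;;
    5) echo "docs" ;;
    6) echo "marketing" ;;
    *) echo "unknown epoch" ;;
  esac
}
```

For instance, `sprint_epoch docs/implplan/SPRINT_3000_0001_0002_policy_engine.md` prints `backend services`, while `sprint_epoch README.md` fails with a non-zero status.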

### As Product Manager

- Review advisories in `docs/product-advisories/`
- Check for overlaps with `docs/product-advisories/archived/`
- Validate against module docs and existing implementations
- Hand over to the project manager role for sprint/task definition

## Task Workflow

### Status Discipline

Always update task status in `docs/implplan/SPRINT_*.md`:

- `TODO` - Not started
- `DOING` - In progress
- `DONE` - Completed
- `BLOCKED` - Waiting on decision/clarification
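The status lifecycle can be expressed as a small transition check. This is a sketch, not tooling from the repository: the `next_status_cell` helper is hypothetical, and two transitions are assumptions drawn from the implementer rules: `TODO` may go straight to `BLOCKED` (a task can be blocked on ambiguity before work starts), and `BLOCKED` returns to `DOING` once unblocked. The `STATE (YYYY-MM-DD)` output mirrors how the sprint trackers stamp cells such as `DONE (2025-11-27)`.

```bash
# Hypothetical helper: validate a status change and print the date-stamped tracker cell.
# Usage: next_status_cell <current> <next>
next_status_cell() {
  case "$1->$2" in
    "TODO->DOING"|"DOING->DONE"|"DOING->BLOCKED"|"TODO->BLOCKED"|"BLOCKED->DOING")
      # Stamp the new state with today's UTC date, as the Delivery Tracker rows do.
      printf '%s (%s)\n' "$2" "$(date -u +%Y-%m-%d)"
      ;;
    *)
      echo "illegal transition: $1 -> $2" >&2
      return 1
      ;;
  esac
}
```

Skipping a state (for example `TODO` directly to `DONE`) is rejected, which keeps the tracker history consistent with `TODO → DOING → DONE/BLOCKED`.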

### Prerequisites

Before coding, confirm that the required docs have been read:

- `docs/README.md`
- `docs/07_HIGH_LEVEL_ARCHITECTURE.md`
- `docs/modules/platform/architecture-overview.md`
- Relevant module dossier (e.g., `docs/modules/<module>/architecture.md`)
- Module-specific `AGENTS.md` file

### Git Rules

- Never use `git reset` unless explicitly told to do so
- Never skip hooks (`--no-verify`) or commit signing (`--no-gpg-sign`) unless explicitly requested

## Configuration

- **Sample configs:** `etc/concelier.yaml.sample`, `etc/authority.yaml.sample`
- **Plugin manifests:** `etc/authority.plugins/*.yaml`
- **NuGet sources:** Curated packages in `local-nugets/`, public sources configured in `Directory.Build.props`

## Documentation

- **Architecture overview:** `docs/07_HIGH_LEVEL_ARCHITECTURE.md`
- **Module dossiers:** `docs/modules/<module>/architecture.md`
- **API/CLI reference:** `docs/09_API_CLI_REFERENCE.md`
- **Offline operation:** `docs/24_OFFLINE_KIT.md`
- **Quickstart:** `docs/10_CONCELIER_CLI_QUICKSTART.md`
- **Sprint planning:** `docs/implplan/SPRINT_*.md`

## CI/CD

Workflows are in `.gitea/workflows/`. Key workflows:

- `build-test-deploy.yml` - Main build, test, and deployment pipeline
- `cli-build.yml` - CLI multi-platform builds
- `scanner-determinism.yml` - Scanner output reproducibility tests
- `policy-lint.yml` - Policy validation

## Environment Variables

- `STELLAOPS_BACKEND_URL` - Backend API URL for CLI
- `STELLAOPS_TEST_MONGO_URI` - MongoDB connection string for integration tests
- `StellaOpsEnableCryptoPro` - Enable GOST crypto support (set to `true` in build)
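A quick way to prepare a shell for the CLI and the integration tests is to export the variables above and fail early if any are missing. The values below are placeholders (the hosts and ports are hypothetical), and the `require_env` helper is not part of the repository. Exporting `StellaOpsEnableCryptoPro` assumes the flag is consumed as an MSBuild property; MSBuild exposes environment variables as properties, so passing `-p:StellaOpsEnableCryptoPro=true` to `dotnet build` should have the same effect.

```bash
# Placeholder values: point these at your own backend and test database.
export STELLAOPS_BACKEND_URL="http://localhost:8080"
export STELLAOPS_TEST_MONGO_URI="mongodb://localhost:27017"
# Assumed MSBuild property; MSBuild reads environment variables as properties.
export StellaOpsEnableCryptoPro=true

# Hypothetical helper: report every listed variable that is unset or empty.
require_env() {
  local missing=0 name value
  for name in "$@"; do
    # Reject anything that is not a valid variable name before using eval.
    case $name in
      ''|[0-9]*|*[!A-Za-z0-9_]*)
        echo "invalid variable name: $name" >&2; missing=1; continue ;;
    esac
    # Indirect lookup that works in POSIX sh as well as bash.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing required variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

require_env STELLAOPS_BACKEND_URL STELLAOPS_TEST_MONGO_URI && echo "environment ok"
```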

@@ -21,22 +21,33 @@

| 1 | POLICY-ENGINE-80-002 | TODO | Depends on 80-001. | Policy · Storage Guild / `src/Policy/StellaOps.Policy.Engine` | Join reachability facts + Redis caches. |
| 2 | POLICY-ENGINE-80-003 | TODO | Depends on 80-002. | Policy · Policy Editor Guild / `src/Policy/StellaOps.Policy.Engine` | SPL predicates/actions reference reachability. |
| 3 | POLICY-ENGINE-80-004 | TODO | Depends on 80-003. | Policy · Observability Guild / `src/Policy/StellaOps.Policy.Engine` | Metrics/traces for signals usage. |
| 4 | POLICY-OBS-50-001 | TODO | — | Policy · Observability Guild / `src/Policy/StellaOps.Policy.Engine` | Telemetry core for API/worker hosts. |
| 5 | POLICY-OBS-51-001 | TODO | Depends on 50-001. | Policy · DevOps Guild / `src/Policy/StellaOps.Policy.Engine` | Golden-signal metrics + SLOs. |
| 6 | POLICY-OBS-52-001 | TODO | Depends on 51-001. | Policy Guild / `src/Policy/StellaOps.Policy.Engine` | Timeline events for evaluate/decision flows. |
| 7 | POLICY-OBS-53-001 | TODO | Depends on 52-001. | Policy · Evidence Locker Guild / `src/Policy/StellaOps.Policy.Engine` | Evaluation evidence bundles + manifests. |
| 8 | POLICY-OBS-54-001 | TODO | Depends on 53-001. | Policy · Provenance Guild / `src/Policy/StellaOps.Policy.Engine` | DSSE attestations for evaluations. |
| 9 | POLICY-OBS-55-001 | TODO | Depends on 54-001. | Policy · DevOps Guild / `src/Policy/StellaOps.Policy.Engine` | Incident mode sampling overrides. |
| 4 | POLICY-OBS-50-001 | DONE (2025-11-27) | — | Policy · Observability Guild / `src/Policy/StellaOps.Policy.Engine` | Telemetry core for API/worker hosts. |
| 5 | POLICY-OBS-51-001 | DONE (2025-11-27) | Depends on 50-001. | Policy · DevOps Guild / `src/Policy/StellaOps.Policy.Engine` | Golden-signal metrics + SLOs. |
| 6 | POLICY-OBS-52-001 | DONE (2025-11-27) | Depends on 51-001. | Policy Guild / `src/Policy/StellaOps.Policy.Engine` | Timeline events for evaluate/decision flows. |
| 7 | POLICY-OBS-53-001 | DONE (2025-11-27) | Depends on 52-001. | Policy · Evidence Locker Guild / `src/Policy/StellaOps.Policy.Engine` | Evaluation evidence bundles + manifests. |
| 8 | POLICY-OBS-54-001 | DONE (2025-11-27) | Depends on 53-001. | Policy · Provenance Guild / `src/Policy/StellaOps.Policy.Engine` | DSSE attestations for evaluations. |
| 9 | POLICY-OBS-55-001 | DONE (2025-11-27) | Depends on 54-001. | Policy · DevOps Guild / `src/Policy/StellaOps.Policy.Engine` | Incident mode sampling overrides. |
| 10 | POLICY-RISK-66-001 | DONE (2025-11-22) | PREP-POLICY-RISK-66-001-RISKPROFILE-LIBRARY-S | Risk Profile Schema Guild / `src/Policy/StellaOps.Policy.RiskProfile` | RiskProfile JSON schema + validator stubs. |
| 11 | POLICY-RISK-66-002 | TODO | Depends on 66-001. | Risk Profile Schema Guild / `src/Policy/StellaOps.Policy.RiskProfile` | Inheritance/merge + deterministic hashing. |
| 12 | POLICY-RISK-66-003 | TODO | Depends on 66-002. | Policy · Risk Profile Schema Guild / `src/Policy/StellaOps.Policy.Engine` | Integrate RiskProfile into Policy Engine config. |
| 13 | POLICY-RISK-66-004 | TODO | Depends on 66-003. | Policy · Risk Profile Schema Guild / `src/Policy/__Libraries/StellaOps.Policy` | Load/save RiskProfiles; validation diagnostics. |
| 14 | POLICY-RISK-67-001 | TODO | Depends on 66-004. | Policy · Risk Engine Guild / `src/Policy/StellaOps.Policy.Engine` | Trigger scoring jobs on new/updated findings. |
| 15 | POLICY-RISK-67-001 | TODO | Depends on 67-001. | Risk Profile Schema Guild · Policy Engine Guild / `src/Policy/StellaOps.Policy.RiskProfile` | Profile storage/versioning lifecycle. |
| 11 | POLICY-RISK-66-002 | DONE (2025-11-27) | Depends on 66-001. | Risk Profile Schema Guild / `src/Policy/StellaOps.Policy.RiskProfile` | Inheritance/merge + deterministic hashing. |
| 12 | POLICY-RISK-66-003 | DONE (2025-11-27) | Depends on 66-002. | Policy · Risk Profile Schema Guild / `src/Policy/StellaOps.Policy.Engine` | Integrate RiskProfile into Policy Engine config. |
| 13 | POLICY-RISK-66-004 | DONE (2025-11-27) | Depends on 66-003. | Policy · Risk Profile Schema Guild / `src/Policy/__Libraries/StellaOps.Policy` | Load/save RiskProfiles; validation diagnostics. |
| 14 | POLICY-RISK-67-001 | DONE (2025-11-27) | Depends on 66-004. | Policy · Risk Engine Guild / `src/Policy/StellaOps.Policy.Engine` | Trigger scoring jobs on new/updated findings. |
| 15 | POLICY-RISK-67-001 | DONE (2025-11-27) | Depends on 67-001. | Risk Profile Schema Guild · Policy Engine Guild / `src/Policy/StellaOps.Policy.RiskProfile` | Profile storage/versioning lifecycle. |

## Execution Log

| Date (UTC) | Update | Owner |
| --- | --- | --- |
| 2025-11-27 | `POLICY-RISK-67-001` (task 15): Created `Lifecycle/RiskProfileLifecycle.cs` with lifecycle models (RiskProfileLifecycleStatus enum: Draft/Active/Deprecated/Archived, RiskProfileVersionInfo, RiskProfileLifecycleEvent, RiskProfileVersionComparison, RiskProfileChange). Created `RiskProfileLifecycleService` with status transitions (CreateVersion, Activate, Deprecate, Archive, Restore), version management, event recording, and version comparison (detecting breaking changes in signals/inheritance). | Implementer |
| 2025-11-27 | `POLICY-RISK-67-001`: Created `Scoring/RiskScoringModels.cs` with FindingChangedEvent, RiskScoringJobRequest, RiskScoringJob, RiskScoringResult models and enums. Created `IRiskScoringJobStore` interface and `InMemoryRiskScoringJobStore` for job persistence. Created `RiskScoringTriggerService` handling FindingChangedEvent triggers with deduplication, batch processing, priority calculation, and job creation. Added risk scoring metrics to PolicyEngineTelemetry (jobs_created, triggers_skipped, duration, findings_scored). Registered services in Program.cs DI. | Implementer |
| 2025-11-27 | `POLICY-RISK-66-004`: Added RiskProfile project reference to StellaOps.Policy library. Created `IRiskProfileRepository` interface with GetAsync, GetVersionAsync, GetLatestAsync, ListProfileIdsAsync, ListVersionsAsync, SaveAsync, DeleteVersionAsync, DeleteAllVersionsAsync, ExistsAsync. Created `InMemoryRiskProfileRepository` for testing/development. Created `RiskProfileDiagnostics` with comprehensive validation (RISK001-RISK050 error codes) covering structure, signals, weights, overrides, and inheritance. Includes `RiskProfileDiagnosticsReport` and `RiskProfileIssue` types. | Implementer |
| 2025-11-27 | `POLICY-RISK-66-003`: Added RiskProfile project reference to Policy Engine. Created `PolicyEngineRiskProfileOptions` with config for enabled, defaultProfileId, profileDirectory, maxInheritanceDepth, validateOnLoad, cacheResolvedProfiles, and inline profile definitions. Created `RiskProfileConfigurationService` for loading profiles from config/files, resolving inheritance, and providing profiles to engine. Updated `PolicyEngineBootstrapWorker` to load profiles at startup. Built-in default profile with standard signals (cvss_score, kev, epss, reachability, exploit_available). | Implementer |
| 2025-11-27 | `POLICY-RISK-66-002`: Created `Models/RiskProfileModel.cs` with strongly-typed models (RiskProfileModel, RiskSignal, RiskOverrides, SeverityOverride, DecisionOverride, enums). Created `Merge/RiskProfileMergeService.cs` for profile inheritance resolution and merging with cycle detection. Created `Hashing/RiskProfileHasher.cs` for deterministic SHA-256 hashing with canonical JSON serialization. | Implementer |
| 2025-11-27 | `POLICY-OBS-55-001`: Created `IncidentMode.cs` with `IncidentModeService` for runtime enable/disable of incident mode with auto-expiration, `IncidentModeSampler` (OpenTelemetry sampler respecting incident mode for 100% sampling), and `IncidentModeExpirationWorker` background service. Added `IncidentMode` option to telemetry config. Registered in Program.cs DI. | Implementer |
| 2025-11-27 | `POLICY-OBS-54-001`: Created `PolicyEvaluationAttestation.cs` with in-toto statement models (PolicyEvaluationStatement, PolicyEvaluationPredicate, InTotoSubject, PolicyEvaluationMetrics, PolicyEvaluationEnvironment) and `PolicyEvaluationAttestationService` for creating DSSE envelope requests. Added Attestor.Envelope project reference. Registered in Program.cs DI. | Implementer |
| 2025-11-27 | `POLICY-OBS-53-001`: Created `EvidenceBundle.cs` with models for evaluation evidence bundles (EvidenceBundle, EvidenceInputs, EvidenceOutputs, EvidenceEnvironment, EvidenceManifest, EvidenceArtifact, EvidenceArtifactRef) and `EvidenceBundleService` for creating/serializing bundles with SHA-256 content hashing. Registered in Program.cs DI. | Implementer |
| 2025-11-27 | `POLICY-OBS-52-001`: Created `PolicyTimelineEvents.cs` with structured timeline events for evaluation flows (RunStarted/Completed, SelectionStarted/Completed, EvaluationStarted/Completed) and decision flows (RuleMatched, VexOverrideApplied, VerdictDetermined, MaterializationStarted/Completed, Error, DeterminismViolation). Events include trace correlation and structured data. Registered in Program.cs DI. | Implementer |
| 2025-11-27 | `POLICY-OBS-51-001`: Added golden-signal metrics (Latency: `policy_api_latency_seconds`, `policy_evaluation_latency_seconds`; Traffic: `policy_requests_total`, `policy_evaluations_total`, `policy_findings_materialized_total`; Errors: `policy_errors_total`, `policy_api_errors_total`, `policy_evaluation_failures_total`; Saturation: `policy_concurrent_evaluations`, `policy_worker_utilization`) and SLO metrics (`policy_slo_burn_rate`, `policy_error_budget_remaining`, `policy_slo_violations_total`). | Implementer |
| 2025-11-27 | `POLICY-OBS-50-001`: Implemented telemetry core for Policy Engine. Added `PolicyEngineTelemetry.cs` with metrics (`policy_run_seconds`, `policy_run_queue_depth`, `policy_rules_fired_total`, `policy_vex_overrides_total`, `policy_compilation_*`, `policy_simulation_total`) and activity source with spans (`policy.select`, `policy.evaluate`, `policy.materialize`, `policy.simulate`, `policy.compile`). Created `TelemetryExtensions.cs` with OpenTelemetry + Serilog configuration. Wired into `Program.cs`. | Implementer |
| 2025-11-20 | Published risk profile library prep (docs/modules/policy/prep/2025-11-20-riskprofile-66-001-prep.md); set PREP-POLICY-RISK-66-001 to DOING. | Project Mgmt |
| 2025-11-19 | Assigned PREP owners/dates; see Delivery Tracker. | Planning |
| 2025-11-08 | Sprint stub; awaiting upstream phases. | Planning |

@@ -17,8 +17,8 @@

## Delivery Tracker

| # | Task ID & handle | State | Key dependency / next step | Owners | Task Definition |
| --- | --- | --- | --- | --- | --- |
| 1 | POLICY-RISK-67-002 | BLOCKED (2025-11-26) | Await risk profile contract + schema (67-001) and API shape. | Policy Guild / `src/Policy/StellaOps.Policy.Engine` | Risk profile lifecycle APIs. |
| 2 | POLICY-RISK-67-002 | BLOCKED (2025-11-26) | Depends on 67-001/67-002 spec; schema draft absent. | Risk Profile Schema Guild / `src/Policy/StellaOps.Policy.RiskProfile` | Publish `.well-known/risk-profile-schema` + CLI validation. |
| 1 | POLICY-RISK-67-002 | DONE (2025-11-27) | — | Policy Guild / `src/Policy/StellaOps.Policy.Engine` | Risk profile lifecycle APIs. |
| 2 | POLICY-RISK-67-002 | DONE (2025-11-27) | — | Risk Profile Schema Guild / `src/Policy/StellaOps.Policy.RiskProfile` | Publish `.well-known/risk-profile-schema` + CLI validation. |
| 3 | POLICY-RISK-67-003 | BLOCKED (2025-11-26) | Blocked by 67-002 contract + simulation inputs. | Policy · Risk Engine Guild / `src/Policy/__Libraries/StellaOps.Policy` | Risk simulations + breakdowns. |
| 4 | POLICY-RISK-68-001 | BLOCKED (2025-11-26) | Blocked by 67-003 outputs and missing Policy Studio contract. | Policy · Policy Studio Guild / `src/Policy/StellaOps.Policy.Engine` | Simulation API for Policy Studio. |
| 5 | POLICY-RISK-68-001 | BLOCKED (2025-11-26) | Blocked until 68-001 API + Authority attachment rules defined. | Risk Profile Schema Guild · Authority Guild / `src/Policy/StellaOps.Policy.RiskProfile` | Scope selectors, precedence rules, Authority attachment. |

@@ -31,11 +31,13 @@

| 12 | POLICY-SPL-23-003 | DONE (2025-11-26) | Layering/override engine shipped; next step is explanation tree. | Policy Guild / `src/Policy/__Libraries/StellaOps.Policy` | Layering/override engine + tests. |
| 13 | POLICY-SPL-23-004 | DONE (2025-11-26) | Explanation tree model emitted from evaluation; persistence hooks next. | Policy · Audit Guild / `src/Policy/__Libraries/StellaOps.Policy` | Explanation tree model + persistence. |
| 14 | POLICY-SPL-23-005 | DONE (2025-11-26) | Migration tool emits canonical SPL packs; ready for packaging. | Policy · DevEx Guild / `src/Policy/__Libraries/StellaOps.Policy` | Migration tool to baseline SPL packs. |
| 15 | POLICY-SPL-24-001 | TODO | Depends on 23-005. | Policy · Signals Guild / `src/Policy/__Libraries/StellaOps.Policy` | Extend SPL with reachability/exploitability predicates. |
| 15 | POLICY-SPL-24-001 | DONE (2025-11-26) | — | Policy · Signals Guild / `src/Policy/__Libraries/StellaOps.Policy` | Extend SPL with reachability/exploitability predicates. |

## Execution Log

| Date (UTC) | Update | Owner |
| --- | --- | --- |
| 2025-11-27 | `POLICY-RISK-67-002` (task 2): Added `RiskProfileSchemaEndpoints.cs` with `/.well-known/risk-profile-schema` endpoint (anonymous, ETag/Cache-Control, schema v1) and `/api/risk/schema/validate` POST endpoint for profile validation. Extended `RiskProfileSchemaProvider` with GetSchemaText(), GetSchemaVersion(), and GetETag() methods. Added `risk-profile` CLI command group with `validate` (--input, --format, --output, --strict) and `schema` (--output) subcommands. Added RiskProfile project reference to CLI. | Implementer |
| 2025-11-27 | `POLICY-RISK-67-002` (task 1): Created `Endpoints/RiskProfileEndpoints.cs` with REST APIs for profile lifecycle management: ListProfiles, GetProfile, ListVersions, GetVersion, CreateProfile (draft), ActivateProfile, DeprecateProfile, ArchiveProfile, GetProfileEvents, CompareProfiles, GetProfileHash. Uses `RiskProfileLifecycleService` for status transitions and `RiskProfileConfigurationService` for profile storage/hashing. Authorization via StellaOpsScopes (PolicyRead/PolicyEdit/PolicyActivate). Registered `RiskProfileLifecycleService` in DI and wired up `MapRiskProfiles()` in Program.cs. | Implementer |
| 2025-11-25 | Delivered SPL v1 schema + sample fixtures (spl-schema@1.json, spl-sample@1.json, SplSchemaResource) and embedded in `StellaOps.Policy`; marked POLICY-SPL-23-001 DONE. | Implementer |
| 2025-11-26 | Implemented SPL canonicalizer + SHA-256 digest (order-stable statements/actions/conditions) with unit tests; marked POLICY-SPL-23-002 DONE. | Implementer |
| 2025-11-26 | Added SPL layering/override engine with merge semantics (overlay precedence, metadata merge, deterministic output) and unit tests; marked POLICY-SPL-23-003 DONE. | Implementer |
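A client can exercise the two schema endpoints described in the log with plain `curl`. In this sketch the base URL is a placeholder, the helper names are hypothetical, and the `CURL` override exists only so the commands can be dry-run with `CURL=echo`. Sending a previously seen ETag in `If-None-Match` is standard HTTP conditional-request usage; that this endpoint answers a match with `304 Not Modified` is an assumption based on the ETag support noted above, not a published contract. The log does not describe authentication for the validate endpoint, so none is sent here.

```bash
# Hypothetical client helpers for the risk-profile schema endpoints.
# The first argument is the Policy Engine base URL (placeholder); set CURL=echo to dry-run.

# GET the schema and print response headers; pass a previously seen ETag to make it conditional.
fetch_risk_schema() {
  local base="$1" etag="${2:-}"
  if [ -n "$etag" ]; then
    "${CURL:-curl}" -sS -D - -H "If-None-Match: $etag" "$base/.well-known/risk-profile-schema"
  else
    "${CURL:-curl}" -sS -D - "$base/.well-known/risk-profile-schema"
  fi
}

# POST a profile document (JSON file) for validation.
validate_risk_profile() {
  local base="$1" file="$2"
  "${CURL:-curl}" -sS -X POST -H "Content-Type: application/json" \
    --data-binary "@$file" "$base/api/risk/schema/validate"
}
```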

@@ -35,7 +35,7 @@

| 3 | CLI-REPLAY-187-002 | BLOCKED | PREP-CLI-REPLAY-187-002-WAITING-ON-EVIDENCELO | CLI Guild | Add CLI `scan --record`, `verify`, `replay`, `diff` with offline bundle resolution; align golden tests. |
| 4 | RUNBOOK-REPLAY-187-004 | BLOCKED | PREP-RUNBOOK-REPLAY-187-004-DEPENDS-ON-RETENT | Docs Guild · Ops Guild | Publish `/docs/runbooks/replay_ops.md` coverage for retention enforcement, RootPack rotation, verification drills. |
| 5 | CRYPTO-REGISTRY-DECISION-161 | DONE | Decision recorded in `docs/security/crypto-registry-decision-2025-11-18.md`; publish contract defaults. | Security Guild · Evidence Locker Guild | Capture decision from 2025-11-18 review; emit changelog + reference implementation for downstream parity. |
| 6 | EVID-CRYPTO-90-001 | TODO | Apply registry defaults and wire `ICryptoProviderRegistry` into EvidenceLocker paths. | Evidence Locker Guild · Security Guild | Route hashing/signing/bundle encryption through `ICryptoProviderRegistry`/`ICryptoHash` for sovereign crypto providers. |
| 6 | EVID-CRYPTO-90-001 | DONE | Implemented; `MerkleTreeCalculator` now uses `ICryptoProviderRegistry` for sovereign crypto routing. | Evidence Locker Guild · Security Guild | Route hashing/signing/bundle encryption through `ICryptoProviderRegistry`/`ICryptoHash` for sovereign crypto providers. |

## Action Tracker

| Action | Owner(s) | Due | Status |

@@ -84,3 +84,4 @@

| 2025-11-18 | Started EVID-OBS-54-002 with shared schema; replay/CLI remain pending ledger shape. | Implementer |
| 2025-11-20 | Completed PREP-EVID-REPLAY-187-001, PREP-CLI-REPLAY-187-002, and PREP-RUNBOOK-REPLAY-187-004; published prep docs at `docs/modules/evidence-locker/replay-payload-contract.md`, `docs/modules/cli/guides/replay-cli-prep.md`, and `docs/runbooks/replay_ops_prep_187_004.md`. | Implementer |
| 2025-11-20 | Added schema readiness and replay delivery prep notes for Evidence Locker Guild; see `docs/modules/evidence-locker/prep/2025-11-20-schema-readiness-blockers.md` and `.../2025-11-20-replay-delivery-sync.md`. Marked PREP-EVIDENCE-LOCKER-GUILD-BLOCKED-SCHEMAS-NO and PREP-EVIDENCE-LOCKER-GUILD-REPLAY-DELIVERY-GU DONE. | Implementer |
| 2025-11-27 | Completed EVID-CRYPTO-90-001: Extended `ICryptoProviderRegistry` with `ContentHashing` capability and `ResolveHasher` method; created `ICryptoHasher` interface with `DefaultCryptoHasher` implementation; wired `MerkleTreeCalculator` to use crypto registry for sovereign crypto routing; added `EvidenceCryptoOptions` for algorithm/provider configuration. | Implementer |

@@ -20,23 +20,38 @@

|

||||

| --- | --- | --- | --- | --- | --- |

|

||||

| 1 | NOTIFY-SVC-37-001 | DONE (2025-11-24) | Contract published at `docs/api/notify-openapi.yaml` and `src/Notifier/StellaOps.Notifier/StellaOps.Notifier.WebService/openapi/notify-openapi.yaml`. | Notifications Service Guild (`src/Notifier/StellaOps.Notifier`) | Define pack approval & policy notification contract (OpenAPI schema, event payloads, resume tokens, security guidance). |

|

||||

| 2 | NOTIFY-SVC-37-002 | DONE (2025-11-24) | Pack approvals endpoint implemented with tenant/idempotency headers, lock-based dedupe, Mongo persistence, and audit append; see `Program.cs` + storage migrations. | Notifications Service Guild | Implement secure ingestion endpoint, Mongo persistence (`pack_approvals`), idempotent writes, audit trail. |

|

||||

| 3 | NOTIFY-SVC-37-003 | DOING (2025-11-24) | Pack approval templates + default channels/rule seeded via hosted seeder; validation tests added (`PackApprovalTemplateTests`, `PackApprovalTemplateSeederTests`). Next: hook dispatch/rendering. | Notifications Service Guild | Approval/policy templates, routing predicates, channel dispatch (email/webhook), localization + redaction. |

|

||||

| 3 | NOTIFY-SVC-37-003 | DONE (2025-11-27) | Dispatch/rendering layer complete: `INotifyTemplateRenderer`/`SimpleTemplateRenderer` (Handlebars-style {{variable}} + {{#each}}, sensitive key redaction), `INotifyChannelDispatcher`/`WebhookChannelDispatcher` (Slack/webhook with retry), `DeliveryDispatchWorker` (BackgroundService), DI wiring in Program.cs, options + tests. | Notifications Service Guild | Approval/policy templates, routing predicates, channel dispatch (email/webhook), localization + redaction. |

| 4 | NOTIFY-SVC-37-004 | DONE (2025-11-24) | Test harness stabilized with in-memory stores; OpenAPI stub returns scope/etag; pack-approvals ack path exercised. | Notifications Service Guild | Acknowledgement API, Task Runner callback client, metrics for outstanding approvals, runbook updates. |

| 5 | NOTIFY-SVC-38-002 | TODO | Depends on 37-004. | Notifications Service Guild | Channel adapters (email, chat webhook, generic webhook) with retry policies, health checks, audit logging. |

| 6 | NOTIFY-SVC-38-003 | TODO | Depends on 38-002. | Notifications Service Guild | Template service (versioned templates, localization scaffolding) and renderer (redaction allowlists, Markdown/HTML/JSON, provenance links). |

| 7 | NOTIFY-SVC-38-004 | TODO | Depends on 38-003. | Notifications Service Guild | REST + WS APIs (rules CRUD, templates preview, incidents list, ack) with audit logging, RBAC, live feed stream. |

| 8 | NOTIFY-SVC-39-001 | TODO | Depends on 38-004. | Notifications Service Guild | Correlation engine with pluggable key expressions/windows, throttler, quiet hours/maintenance evaluator, incident lifecycle. |

| 9 | NOTIFY-SVC-39-002 | TODO | Depends on 39-001. | Notifications Service Guild | Digest generator (queries, formatting) with schedule runner and distribution. |

| 10 | NOTIFY-SVC-39-003 | TODO | Depends on 39-002. | Notifications Service Guild | Simulation engine/API to dry-run rules against historical events, returning matched actions with explanations. |

| 11 | NOTIFY-SVC-39-004 | TODO | Depends on 39-003. | Notifications Service Guild | Quiet hour calendars + default throttles with audit logging and operator overrides. |

| 12 | NOTIFY-SVC-40-001 | TODO | Depends on 39-004. | Notifications Service Guild | Escalations + on-call schedules, ack bridge, PagerDuty/OpsGenie adapters, CLI/in-app inbox channels. |

| 13 | NOTIFY-SVC-40-002 | TODO | Depends on 40-001. | Notifications Service Guild | Summary storm breaker notifications, localization bundles, fallback handling. |

| 14 | NOTIFY-SVC-40-003 | TODO | Depends on 40-002. | Notifications Service Guild | Security hardening: signed ack links (KMS), webhook HMAC/IP allowlists, tenant isolation fuzz tests, HTML sanitization. |

| 15 | NOTIFY-SVC-40-004 | TODO | Depends on 40-003. | Notifications Service Guild | Observability (metrics/traces for escalations/latency), dead-letter handling, chaos tests for channel outages, retention policies. |

| 5 | NOTIFY-SVC-38-002 | DONE (2025-11-27) | Channel adapters complete: `IChannelAdapter`, `WebhookChannelAdapter`, `EmailChannelAdapter`, `ChatWebhookChannelAdapter` with retry policies (exponential backoff + jitter), health checks, audit logging, HMAC signing, `ChannelAdapterFactory` DI registration. Tests at `StellaOps.Notifier.Tests/Channels/`. | Notifications Service Guild | Channel adapters (email, chat webhook, generic webhook) with retry policies, health checks, audit logging. |

| 6 | NOTIFY-SVC-38-003 | DONE (2025-11-27) | Template service complete: `INotifyTemplateService`/`NotifyTemplateService` (locale fallback chain, versioning, CRUD with audit), `EnhancedTemplateRenderer` (configurable redaction allowlists/denylists, Markdown/HTML/JSON/PlainText format conversion, provenance links, {{#if}} conditionals, format specifiers), `TemplateRendererOptions`, DI registration via `AddTemplateServices()`. Tests at `StellaOps.Notifier.Tests/Templates/`. | Notifications Service Guild | Template service (versioned templates, localization scaffolding) and renderer (redaction allowlists, Markdown/HTML/JSON, provenance links). |

| 7 | NOTIFY-SVC-38-004 | DONE (2025-11-27) | REST APIs complete: `/api/v2/notify/rules` (CRUD), `/api/v2/notify/templates` (CRUD + preview + validate), `/api/v2/notify/incidents` (list + ack + resolve). Contract DTOs at `Contracts/RuleContracts.cs`, `TemplateContracts.cs`, `IncidentContracts.cs`. Endpoints via `MapNotifyApiV2()` extension. Audit logging on all mutations. Tests at `StellaOps.Notifier.Tests/Endpoints/`. | Notifications Service Guild | REST + WS APIs (rules CRUD, templates preview, incidents list, ack) with audit logging, RBAC, live feed stream. |

| 8 | NOTIFY-SVC-39-001 | DONE (2025-11-27) | Correlation engine complete: `ICorrelationEngine`/`CorrelationEngine` (orchestrates key building, incident management, throttling, quiet hours), `ICorrelationKeyBuilder` interface with `CompositeCorrelationKeyBuilder` (tenant+kind+payload fields), `TemplateCorrelationKeyBuilder` (template expressions), `CorrelationKeyBuilderFactory`. `INotifyThrottler`/`InMemoryNotifyThrottler` (sliding window throttling). `IQuietHoursEvaluator`/`QuietHoursEvaluator` (quiet hours schedules, maintenance windows). `IIncidentManager`/`InMemoryIncidentManager` (incident lifecycle: open/acknowledged/resolved). Notification policies (FirstOnly, EveryEvent, OnEscalation, Periodic). DI registration via `AddCorrelationServices()`. Comprehensive tests at `StellaOps.Notifier.Tests/Correlation/`. | Notifications Service Guild | Correlation engine with pluggable key expressions/windows, throttler, quiet hours/maintenance evaluator, incident lifecycle. |

| 9 | NOTIFY-SVC-39-002 | DONE (2025-11-27) | Digest generator complete: `IDigestGenerator`/`DigestGenerator` (queries incidents, calculates summary statistics, builds timeline, renders to Markdown/HTML/PlainText/JSON), `IDigestScheduler`/`InMemoryDigestScheduler` (cron-based scheduling with Cronos, timezone support, next-run calculation), `DigestScheduleRunner` BackgroundService (concurrent schedule execution with semaphore limiting), `IDigestDistributor`/`DigestDistributor` (webhook/Slack/Teams/email distribution with format-specific payloads). DTOs: `DigestQuery`, `DigestContent`, `DigestSummary`, `DigestIncident`, `EventKindSummary`, `TimelineEntry`, `DigestSchedule`, `DigestRecipient`. DI registration via `AddDigestServices()` with `DigestServiceBuilder`. Tests at `StellaOps.Notifier.Tests/Digest/`. | Notifications Service Guild | Digest generator (queries, formatting) with schedule runner and distribution. |

| 10 | NOTIFY-SVC-39-003 | DONE (2025-11-27) | Simulation engine complete: `ISimulationEngine`/`SimulationEngine` (dry-runs rules against events without side effects, evaluates all rules against all events, builds detailed match/non-match explanations), `SimulationRequest`/`SimulationResult` DTOs with `SimulationEventResult`, `SimulationRuleMatch`, `SimulationActionMatch`, `SimulationRuleNonMatch`, `SimulationRuleSummary`. Rule validation via `ValidateRuleAsync` with error/warning detection (missing fields, broad matches, unknown severities, disabled actions). API endpoint at `/api/v2/simulate` (POST for simulation, POST /validate for rule validation) via `SimulationEndpoints.cs`. DI registration via `AddSimulationServices()`. Tests at `StellaOps.Notifier.Tests/Simulation/SimulationEngineTests.cs`. | Notifications Service Guild | Simulation engine/API to dry-run rules against historical events, returning matched actions with explanations. |

| 11 | NOTIFY-SVC-39-004 | DONE (2025-11-27) | Quiet hour calendars, throttle configs, audit logging, and operator overrides implemented. | Notifications Service Guild | Quiet hour calendars + default throttles with audit logging and operator overrides. |

| 12 | NOTIFY-SVC-40-001 | DONE (2025-11-27) | Escalation and on-call systems complete. | Notifications Service Guild | Escalations + on-call schedules, ack bridge, PagerDuty/OpsGenie adapters, CLI/in-app inbox channels. |

| 13 | NOTIFY-SVC-40-002 | DONE (2025-11-27) | Storm breaker, localization, and fallback services complete. | Notifications Service Guild | Summary storm breaker notifications, localization bundles, fallback handling. |

| 14 | NOTIFY-SVC-40-003 | DONE (2025-11-27) | Security services complete. | Notifications Service Guild | Security hardening: signed ack links (KMS), webhook HMAC/IP allowlists, tenant isolation fuzz tests, HTML sanitization. |

| 15 | NOTIFY-SVC-40-004 | DONE (2025-11-27) | Observability stack complete. | Notifications Service Guild | Observability (metrics/traces for escalations/latency), dead-letter handling, chaos tests for channel outages, retention policies. |
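
The channel rows above (NOTIFY-SVC-37-003, NOTIFY-SVC-38-002) describe delivery retries with exponential backoff plus jitter. The Python sketch below shows that pattern using the "full jitter" variant, where each delay is a uniform draw between zero and the capped exponential delay. The function names, the 0.5 s base, the 30 s cap, and the `is_transient` hook are illustrative assumptions. They are not values or APIs taken from `ChannelAdapterOptions` or the adapters themselves.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def backoff_delay(attempt: int, base: float = 0.5, cap: float = 30.0,
                  rng: Optional[random.Random] = None) -> float:
    """Delay in seconds after failed attempt `attempt` (1-based), "full jitter" style:
    a uniform draw from [0, min(cap, base * 2**(attempt - 1))]."""
    if attempt < 1:
        raise ValueError("attempt must be >= 1")
    rng = rng or random.Random()
    ceiling = min(cap, base * 2 ** (attempt - 1))
    return rng.uniform(0.0, ceiling)


def send_with_retry(send: Callable[[], T], max_attempts: int = 3,
                    is_transient: Callable[[Exception], bool] = lambda exc: True,
                    sleep: Callable[[float], None] = time.sleep,
                    rng: Optional[random.Random] = None) -> T:
    """Call `send` until it succeeds, a non-transient error occurs, or attempts run out."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return send()
        except Exception as exc:
            # Give up on the last attempt, or on errors a retry cannot fix (e.g. HTTP 400).
            if attempt == max_attempts or not is_transient(exc):
                raise
            sleep(backoff_delay(attempt, rng=rng))
    raise RuntimeError("unreachable")
```

Injecting `sleep` and `rng` keeps the policy deterministic in tests. Full jitter spreads out retries from many concurrent deliveries so they do not hit a recovering endpoint in lockstep.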

## Execution Log

| Date (UTC) | Update | Owner |

| --- | --- | --- |

| 2025-11-27 | Completed observability and chaos tests (NOTIFY-SVC-40-004): Implemented comprehensive observability stack for the Notifier module. **Metrics Service** (`INotifierMetrics`/`DefaultNotifierMetrics`): Uses System.Diagnostics.Metrics API with counters for delivery attempts, escalations, storm events, fallbacks, dead-letters; histograms for delivery latency, acknowledgment latency; observable gauges for active escalations/storms/pending deliveries. `NotifierMetricsSnapshot` provides point-in-time metrics with tenant filtering. Configuration via `NotifierMetricsOptions` (Enabled, MeterName, SamplingInterval, HistogramBuckets). **Tracing Service** (`INotifierTracing`/`DefaultNotifierTracing`): Uses System.Diagnostics.Activity API (OpenTelemetry compatible) for distributed tracing. Span types: delivery, escalation, digest, template render, correlation, webhook validation. Helper methods: `AddEvent()`, `SetError()`, `SetOk()`, `AddTags()`, `StartLinkedSpan()`. Extension methods for recording delivery results, escalation levels, storm detection, fallbacks, template renders, correlation results. Configuration via `NotifierTracingOptions` (Enabled, SourceName, IncludeSensitiveData, SamplingRatio, MaxAttributesPerSpan, MaxEventsPerSpan). **Dead Letter Handler** (`IDeadLetterHandler`/`InMemoryDeadLetterHandler`): Queue for failed notifications with entry lifecycle (Pending→PendingRetry→Retried, Discarded). Operations: `DeadLetterAsync()`, `RetryAsync()` (with retry limits), `DiscardAsync()`, `GetEntriesAsync()` (with status/channel filtering, pagination), `GetStatisticsAsync()` (totals, breakdown by channel/reason), `PurgeAsync()` (cleanup old entries). Observer pattern via `Subscribe()`/`IDeadLetterObserver` for real-time notifications. Configuration via `DeadLetterOptions` (Enabled, MaxRetries, RetryDelay, MaxEntriesPerTenant). **Chaos Test Runner** (`IChaosTestRunner`/`InMemoryChaosTestRunner`): Fault injection framework for resilience testing. Fault types: Outage (complete failure), PartialFailure (percentage-based), Latency (delay injection), Intermittent (random failures), RateLimit (throttling), Timeout, ErrorResponse (specific HTTP codes), CorruptResponse. Experiment lifecycle: create, start, stop, cleanup. `ShouldFailAsync()` checks active experiments and returns `ChaosDecision` with fault details. Outcome recording and statistics. Configuration via `ChaosTestOptions` (Enabled, MaxConcurrentExperiments, MaxExperimentDuration, RequireTenantTarget, AllowedInitiators). **Retention Policy Service** (`IRetentionPolicyService`/`InMemoryRetentionPolicyService`): Data cleanup policies for delivery logs, escalations, storm events, dead letters, audit logs, metrics, traces, chaos experiments, isolation violations, webhook logs, template cache. Actions: Delete, Archive, Compress, FlagForReview. Features: cron-based scheduling, tenant scoping, execution history, preview before execute, pluggable `IRetentionHandler` per data type, filters by channel/status/severity/tags. Configuration via `RetentionPolicyOptions` (Enabled, DefaultRetentionPeriod, Min/MaxRetentionPeriod, DefaultBatchSize, ExecutionHistoryRetention, DefaultPeriods per data type). **REST APIs** via `ObservabilityEndpoints.cs`: `/api/v1/observability/metrics` (GET snapshot), `/metrics/{tenantId}` (tenant-specific), `/dead-letters/{tenantId}` (list/get/retry/discard/stats/purge), `/chaos/experiments` (list/get/start/stop/results), `/retention/policies` (CRUD/execute/preview/history). **DI Registration** via `AddNotifierObservabilityServices()`. Updated `Program.cs` with service and endpoint registration. **Tests**: `ChaosTestRunnerTests` (18 tests covering experiment lifecycle, fault types, rate limiting, expiration), `RetentionPolicyServiceTests` (16 tests covering policy CRUD, execution, preview, history), `DeadLetterHandlerTests` (16 tests covering entry lifecycle, filtering, statistics, observers). NOTIFY-SVC-40-004 marked DONE. | Implementer |

| 2025-11-27 | Completed security hardening (NOTIFY-SVC-40-003): Implemented comprehensive security services for the Notifier module. **Signing Service** (`ISigningService`/`SigningService`): JWT-like token generation with header/body/signature structure, HMAC-SHA256 signing, Base64URL encoding, key rotation support via `ISigningKeyProvider` interface. `LocalSigningKeyProvider` for in-memory key management with retention period and automatic cleanup. Token verification with expiry checking, key lookup, and constant-time signature comparison. `SigningPayload` record with TokenId, Purpose, TenantId, Subject, Target, ExpiresAt, and custom Claims. `SigningVerificationResult` with IsValid, Payload, Error, and ErrorCode (InvalidFormat, InvalidSignature, Expired, InvalidPayload, KeyNotFound, Revoked). Configuration via `SigningServiceOptions` (KeyProvider type, LocalSigningKey, Algorithm, DefaultExpiry, KeyRotationInterval, KeyRetentionPeriod, KMS/Azure/GCP URLs for future cloud provider support). **Webhook Security Service** (`IWebhookSecurityService`/`InMemoryWebhookSecurityService`): HMAC signature validation (SHA256/SHA384/SHA512), configurable signature formats (hex/base64/base64url) with optional prefixes (e.g., the "sha256=" prefix used by GitHub), IP allowlist with CIDR subnet matching, replay protection with nonce caching and timestamp validation, known provider IP ranges for Slack/GitHub/PagerDuty. `WebhookSecurityConfig` record with ConfigId, TenantId, ChannelId, SecretKey, Algorithm, SignatureHeader, SignatureFormat, TimestampHeader, MaxRequestAge, AllowedIps, RequireSignature. `WebhookValidationResult` with IsValid, Errors, Warnings, PassedChecks, FailedChecks flags (SignatureValid, IpAllowed, NotExpired, NotReplay). **HTML Sanitizer** (`IHtmlSanitizer`/`DefaultHtmlSanitizer`): Regex-based HTML sanitization with configurable profiles (Minimal, Basic, Rich, Email). Removes script tags, event handlers (onclick, onerror, etc.), and `javascript:` URLs. Tag/attribute allowlists with global and tag-specific rules. CSS property allowlists for style attributes. URL scheme validation (http, https, mailto, tel). Comment stripping, content length limits, nesting depth limits. `SanitizationProfile` record defining AllowedTags, AllowedAttributes, AllowedUrlSchemes, AllowedCssProperties, MaxNestingDepth, MaxContentLength. `HtmlValidationResult` with error types (DisallowedTag, DisallowedAttribute, ScriptDetected, EventHandlerDetected, JavaScriptUrlDetected). Utilities: `EscapeHtml()`, `StripTags()`. Custom profile registration. **Tenant Isolation Validator** (`ITenantIsolationValidator`/`InMemoryTenantIsolationValidator`): Resource-level tenant isolation with registration tracking. Validates access to deliveries, channels, templates, subscriptions. Admin tenant bypass patterns (regex-based). System resource type bypass. Cross-tenant access grants with operation restrictions (Read, Write, Delete, Execute, Share flags), expiration support, and auditable grant/revoke operations. Violation recording with severity levels (Low, Medium, High, Critical based on operation type). Built-in fuzz testing via `RunFuzzTestAsync()` with configurable iterations, tenant IDs, resource types, cross-tenant grant testing, and edge case testing. `TenantFuzzTestResult` with pass/fail counts, execution time, and detailed failure information. **REST APIs** via `SecurityEndpoints.cs`: `/api/v2/security/tokens/sign` (POST), `/tokens/verify` (POST), `/tokens/{token}/info` (GET), `/keys/rotate` (POST), `/webhooks` (POST register, GET config), `/webhooks/validate` (POST), `/webhooks/{tenantId}/{channelId}/allowlist` (PUT), `/html/sanitize` (POST), `/html/validate` (POST), `/html/strip` (POST), `/tenants/validate` (POST), `/tenants/{tenantId}/violations` (GET), `/tenants/fuzz-test` (POST), `/tenants/grants` (POST grant, DELETE revoke). **DI Registration** via `AddNotifierSecurityServices()` with `SecurityServiceBuilder` for in-memory or persistent providers. Options classes: `SigningServiceOptions`, `WebhookSecurityOptions`, `HtmlSanitizerOptions`, `TenantIsolationOptions`. Updated `Program.cs` with service and endpoint registration. **Tests**: `SigningServiceTests` (9 tests covering sign/verify, expiry, tampering, key rotation), `LocalSigningKeyProviderTests` (5 tests), `WebhookSecurityServiceTests` (12 tests covering HMAC validation, IP allowlists, replay protection), `HtmlSanitizerTests` (22 tests covering tag/attribute filtering, XSS prevention, profiles), `TenantIsolationValidatorTests` (17 tests covering access validation, grants, fuzz testing). NOTIFY-SVC-40-003 marked DONE. | Implementer |

| 2025-11-27 | Completed storm breaker, localization, and fallback handling (NOTIFY-SVC-40-002): Implemented `IStormBreaker`/`InMemoryStormBreaker` for notification storm detection and consolidation (configurable thresholds per event-kind, sliding window tracking, storm state management, automatic suppression with periodic summaries, cooldown-based storm ending). Storm detection tracks event rates and consolidates high-volume notifications into summary notifications sent at configurable intervals. Created `ILocalizationService`/`InMemoryLocalizationService` for multi-locale notification content management with bundle-based storage (tenant-scoped + system bundles), locale fallback chains (e.g., de-AT → de-DE → de → en-US), named placeholder substitution with locale-aware formatting (numbers, dates), caching with configurable TTL, and seeded system bundles for en-US, de-DE, fr-FR covering storm/fallback/escalation/digest strings. Implemented `IFallbackHandler`/`InMemoryFallbackHandler` for channel fallback routing when primary channels fail (configurable fallback chains per channel type, tenant-specific chain overrides, delivery state tracking, max attempt limiting, statistics collection for success/failure/exhaustion rates). REST APIs: `/api/v2/storm-breaker/storms` (list active storms, get state, generate summary, clear), `/api/v2/localization/bundles` (CRUD, validate), `/api/v2/localization/strings/{key}` (get/format), `/api/v2/localization/locales` (list supported), `/api/v2/fallback/statistics` (get stats), `/api/v2/fallback/chains/{channelType}` (get/set), `/api/v2/fallback/test` (test resolution). Options classes: `StormBreakerOptions` (threshold, window, summary interval, cooldown, event-kind overrides), `LocalizationServiceOptions` (default locale, fallback chains, caching, placeholder format), `FallbackHandlerOptions` (max attempts, default chains, state retention, exhaustion notification). DI registration via `AddStormBreakerServices()` with `StormBreakerServiceBuilder` for custom implementations. Endpoints via `StormBreakerEndpoints.cs`, `LocalizationEndpoints.cs`, `FallbackEndpoints.cs`. Updated `Program.cs` with service and endpoint registration. Tests: `InMemoryStormBreakerTests` (14 tests covering detection, suppression, summaries, thresholds), `InMemoryLocalizationServiceTests` (17 tests covering bundles, fallback, formatting), `InMemoryFallbackHandlerTests` (15 tests covering chains, statistics, exhaustion). NOTIFY-SVC-40-002 marked DONE. | Implementer |

| 2025-11-27 | Completed escalation and on-call schedules (NOTIFY-SVC-40-001): Implemented escalation engine (`IEscalationEngine`/`EscalationEngine`) for incident escalation with level-based notification, acknowledgment processing, cycle management (restart/repeat/stop), and timeout handling. Created `IEscalationPolicyService`/`InMemoryEscalationPolicyService` for policy CRUD (levels, targets, exhausted actions, max cycles). Implemented `IOnCallScheduleService`/`InMemoryOnCallScheduleService` for on-call schedule management with rotation layers (daily/weekly/custom), handoff times, restrictions (day-of-week, time-of-day), and override support. Created `IAckBridge`/`AckBridge` for processing acknowledgments from multiple sources (signed links, PagerDuty, OpsGenie, Slack, Teams, email, CLI, in-app) with HMAC-signed token generation and validation. Added `PagerDutyAdapter` (Events API v2 integration with dedup keys, severity mapping, trigger/acknowledge/resolve actions, webhook parsing) and `OpsGenieAdapter` (Alert API v2 integration, priority mapping, alert lifecycle, webhook parsing). Implemented `IInboxChannel`/`InAppInboxChannel`/`CliNotificationChannel` for inbox-style notifications with priority ordering, read/unread tracking, expiration handling, query filtering (type, priority, limit), and CLI formatting. Created `IExternalIntegrationAdapter` interface for bi-directional integration (create incidents, parse webhooks). REST APIs via `EscalationEndpoints.cs`: `/api/v2/escalation-policies` (CRUD), `/api/v2/oncall-schedules` (CRUD + on-call lookup + overrides), `/api/v2/escalations` (active escalation management, manual escalate/stop), `/api/v2/ack` (acknowledgment processing + PagerDuty/OpsGenie webhook endpoints). DI registration via `AddEscalationServices()`, `AddPagerDutyIntegration()`, `AddOpsGenieIntegration()`. Updated `Program.cs` with service registration and endpoint mapping. Tests: `EscalationPolicyServiceTests` (14 tests), `EscalationEngineTests` (14 tests), `AckBridgeTests` (13 tests), `InboxChannelTests` (22 tests). NOTIFY-SVC-40-001 marked DONE. | Implementer |

| 2025-11-27 | Extended NOTIFY-SVC-39-004 with REST APIs: Added `/api/v2/quiet-hours/calendars` endpoints (`QuietHoursEndpoints.cs`) for calendar CRUD operations (list, get, create, update, delete) plus `/evaluate` for checking quiet hours status. Created `/api/v2/throttles/config` endpoints (`ThrottleEndpoints.cs`) for throttle configuration CRUD plus `/evaluate` for effective throttle duration lookup. Added `/api/v2/overrides` endpoints (`OperatorOverrideEndpoints.cs`) for override management (list, get, create, revoke) plus `/check` for checking applicable overrides. Created `IQuietHoursCalendarService`/`InMemoryQuietHoursCalendarService` (tenant calendars with named schedules, event-kind filtering, priority ordering, timezone support, overnight window handling). Created `IThrottleConfigurationService`/`InMemoryThrottleConfigurationService` (default durations, event-kind prefix matching for overrides, burst limiting). API request/response DTOs for all endpoints. DI registration via `AddQuietHoursServices()`. Endpoint mapping in `Program.cs`. Additional tests: `QuietHoursCalendarServiceTests` (15 tests covering calendar CRUD, schedule evaluation, day-of-week filtering, priority ordering), `ThrottleConfigurationServiceTests` (14 tests covering config CRUD, prefix matching, audit logging). | Implementer |

| 2025-11-27 | Completed quiet hour calendars and default throttles (NOTIFY-SVC-39-004): implemented `IQuietHourCalendarService`/`InMemoryQuietHourCalendarService` (per-tenant calendar management, multiple named schedules per calendar, priority-based evaluation, scope/event-kind filtering, timezone support, day-of-week/specific-date scheduling). Created `IThrottleConfigService`/`InMemoryThrottleConfigService` for hierarchical throttle configuration (global → tenant → event-kind pattern matching, burst allowance, cooldown periods, wildcard/prefix patterns). Implemented `ISuppressionAuditLogger`/`InMemorySuppressionAuditLogger` (comprehensive audit logging for all suppression config changes with filtering by time/action/actor/resource). Created `IOperatorOverrideService`/`InMemoryOperatorOverrideService` (temporary overrides to bypass quiet hours/throttling/maintenance, duration limits, usage counting, expiration handling, revocation). DTOs: `QuietHourCalendar`, `CalendarSchedule`, `CalendarEvaluationResult`, `TenantThrottleConfig`, `EventKindThrottleConfig`, `EffectiveThrottleConfig`, `SuppressionAuditEntry`, `OperatorOverride`, `OverrideCheckResult`. Configuration via `SuppressionAuditOptions`, `OperatorOverrideOptions`. Updated `CorrelationServiceExtensions` with DI registration for all new services and builder methods. Tests: `QuietHourCalendarServiceTests` (14 tests), `ThrottleConfigServiceTests` (15 tests), `OperatorOverrideServiceTests` (17 tests), `SuppressionAuditLoggerTests` (11 tests). NOTIFY-SVC-39-004 marked DONE. | Implementer |

| 2025-11-27 | Completed simulation engine (NOTIFY-SVC-39-003): implemented `ISimulationEngine`/`SimulationEngine` that evaluates rules against events without side effects. Core functionality: accepts events from request or tenant rules from repository, evaluates each event against each rule using `INotifyRuleEvaluator`, builds detailed match results with action explanations (channel availability, template assignment, throttle settings), and non-match explanations (event kind mismatch, severity below threshold, label mismatch, etc.). Created comprehensive DTOs: `SimulationRequest` (tenant, events, rules, filters, options), `SimulationResult` (totals, event results, rule summaries, duration), `SimulationEventResult`, `SimulationRuleMatch`, `SimulationActionMatch`, `SimulationRuleNonMatch`, `SimulationRuleSummary`, `NonMatchReasonSummary`. Implemented rule validation via `ValidateRuleAsync` with error detection (missing required fields) and warning detection (broad matches, unknown severities, no enabled actions, disabled rules). REST API at `/api/v2/simulate` (POST main simulation, POST /validate for rule validation) via `SimulationEndpoints.cs` with request/response mapping. DI registration via `AddSimulationServices()`. Tests: `SimulationEngineTests` (13 tests covering matching, non-matching, rule summaries, filtering, validation). NOTIFY-SVC-39-003 marked DONE. | Implementer |

| 2025-11-27 | Completed digest generator (NOTIFY-SVC-39-002): implemented `IDigestGenerator`/`DigestGenerator` that queries incidents from `IIncidentManager`, calculates summary statistics (total/new/acknowledged/resolved counts, total events, average resolution time, median acknowledge time), builds event kind summaries with percentages, and generates activity timelines. Multi-format rendering: Markdown (tables, status badges), HTML (styled document with tables and cards), PlainText (ASCII-formatted), and JSON (serialized content). Created `IDigestScheduler`/`InMemoryDigestScheduler` for managing digest schedules with cron expressions (using Cronos library), timezone support, and automatic next-run calculation. Implemented `DigestScheduleRunner` BackgroundService with configurable check intervals and semaphore-limited concurrent execution. Created `IDigestDistributor`/`DigestDistributor` supporting webhook (JSON payload), Slack (blocks-based messages), Teams (Adaptive Cards), and email delivery. Configuration via `DigestOptions`, `DigestSchedulerOptions`, `DigestDistributorOptions`. DI registration via `AddDigestServices()` with `DigestServiceBuilder` for customization. Tests: `DigestGeneratorTests` (rendering, statistics, filtering), `DigestSchedulerTests` (scheduling, cron, timezone). NOTIFY-SVC-39-002 marked DONE. | Implementer |

| 2025-11-27 | Completed correlation engine (NOTIFY-SVC-39-001): implemented `ICorrelationEngine`/`CorrelationEngine` that orchestrates key building, incident management, throttling, and quiet hours evaluation. Created `ICorrelationKeyBuilder` interface with `CompositeCorrelationKeyBuilder` (builds keys from tenant+kind+payload fields using SHA256 hashing) and `TemplateCorrelationKeyBuilder` (builds keys from template strings with variable substitution). Implemented `INotifyThrottler`/`InMemoryNotifyThrottler` with sliding window algorithm for rate limiting. Created `IQuietHoursEvaluator`/`QuietHoursEvaluator` supporting scheduled quiet hours (overnight windows, day-of-week filters, excluded event kinds, timezone support) and maintenance windows (tenant-scoped, event-kind filtering). Implemented `IIncidentManager`/`InMemoryIncidentManager` for incident lifecycle (Open→Acknowledged→Resolved) with correlation window support and reopen-on-new-event option. Added notification policies (FirstOnly, EveryEvent, OnEscalation, Periodic) with event count thresholds and severity escalation detection. DI registration via `AddCorrelationServices()` with `CorrelationServiceBuilder` for customization. Comprehensive test suites: `CorrelationEngineTests`, `CorrelationKeyBuilderTests`, `NotifyThrottlerTests`, `IncidentManagerTests`, `QuietHoursEvaluatorTests`. NOTIFY-SVC-39-001 marked DONE. | Implementer |

| 2025-11-27 | Enhanced NOTIFY-SVC-38-004 with additional API paths and WebSocket support: Added simplified `/api/v2/rules`, `/api/v2/templates`, `/api/v2/incidents` endpoints (parallel to `/api/v2/notify/...` paths) via `RuleEndpoints.cs`, `TemplateEndpoints.cs`, `IncidentEndpoints.cs`. Implemented WebSocket live feed at `/api/v2/incidents/live` (`IncidentLiveFeed.cs`) with tenant-scoped subscriptions, broadcast methods (`BroadcastIncidentUpdateAsync`, `BroadcastStatsUpdateAsync`), ping/pong keep-alive, and connection tracking. Fixed a bug in `NotifyApiEndpoints.cs` that called the nonexistent `ListPendingAsync` method; it now uses `QueryAsync`. Updated `Program.cs` to enable WebSocket middleware and map all v2 endpoints. Contract types renamed to avoid conflicts: `DeliveryAckRequest`, `DeliveryResponse`, `DeliveryStatsResponse`. | Implementer |

| 2025-11-27 | Completed REST APIs (NOTIFY-SVC-38-004): implemented `/api/v2/notify/rules` (GET list, GET by ID, POST create, PUT update, DELETE), `/api/v2/notify/templates` (GET list, GET by ID, POST create, DELETE, POST preview, POST validate), `/api/v2/notify/incidents` (GET list, POST ack, POST resolve). Created API contract DTOs: `RuleContracts.cs` (RuleCreateRequest, RuleUpdateRequest, RuleResponse, RuleMatchRequest/Response, RuleActionRequest/Response), `TemplateContracts.cs` (TemplatePreviewRequest/Response, TemplateCreateRequest, TemplateResponse), `IncidentContracts.cs` (IncidentListQuery, IncidentResponse, IncidentListResponse, IncidentAckRequest, IncidentResolveRequest). Endpoints registered via `MapNotifyApiV2()` extension method in `NotifyApiEndpoints.cs`. All mutations include audit logging. Tests at `NotifyApiEndpointsTests.cs`. NOTIFY-SVC-38-004 marked DONE. | Implementer |

| 2025-11-27 | Completed template service (NOTIFY-SVC-38-003): implemented `INotifyTemplateService`/`NotifyTemplateService` with locale fallback chain (exact locale → language-only → en-us default), template versioning via UpdatedAt timestamps, CRUD operations with audit logging. Created `EnhancedTemplateRenderer` with configurable redaction (safe/paranoid/none modes, allowlists/denylists), multi-format output (Markdown→HTML/PlainText conversion), provenance links, `{{#if}}` conditionals, and format specifiers (`{{var\|upper}}`, `{{var\|html}}`, etc.). Added `TemplateRendererOptions` for configuration. DI registration via `AddTemplateServices()` extension. Comprehensive test suites: `NotifyTemplateServiceTests` (14 tests) and `EnhancedTemplateRendererTests` (13 tests). NOTIFY-SVC-38-003 marked DONE. | Implementer |

| 2025-11-27 | Completed dispatch/rendering wiring (NOTIFY-SVC-37-003): implemented `INotifyTemplateRenderer` interface with `SimpleTemplateRenderer` (Handlebars-style `{{variable}}` substitution, `{{#each}}` iteration, sensitive key redaction for secret/password/token/key/apikey/credential), `INotifyChannelDispatcher` interface with `WebhookChannelDispatcher` (Slack/Webhook/Custom channels, exponential backoff retry, max 3 attempts), `DeliveryDispatchWorker` BackgroundService for polling pending deliveries. Added `DispatchInterval`/`DispatchBatchSize` to `NotifierWorkerOptions`. DI registration in Program.cs with HttpClient configuration. Created comprehensive unit tests: `SimpleTemplateRendererTests` (9 tests covering variable substitution, nested payloads, redaction, each blocks, hashing) and `WebhookChannelDispatcherTests` (8 tests covering success/failure/retry scenarios, payload formatting). Fixed `DeliveryDispatchWorker` model compatibility with `NotifyDelivery` record (using `StatusReason`, `CompletedAt`, `Attempts` array). NOTIFY-SVC-37-003 marked DONE. | Implementer |
| 2025-11-27 | Completed channel adapters (NOTIFY-SVC-38-002): implemented `IChannelAdapter` interface, `WebhookChannelAdapter` (HMAC signing, exponential backoff), `EmailChannelAdapter` (SMTP with SmtpClient), `ChatWebhookChannelAdapter` (Slack blocks/Teams Adaptive Cards), `ChannelAdapterOptions`, `ChannelAdapterFactory` with DI registration. Added `WebhookChannelAdapterTests`. Starting NOTIFY-SVC-38-003 (template service). | Implementer |
| 2025-11-27 | Enhanced pack approvals contract: created formal OpenAPI 3.1 spec at `src/Notifier/.../openapi/pack-approvals.yaml`, published comprehensive contract docs at `docs/notifications/pack-approvals-contract.md` with security guidance/resume token mechanics, updated `PackApprovalAckRequest` with decision/comment/actor fields, enriched audit payloads in ack endpoint. | Implementer |
| 2025-11-19 | Normalized sprint to standard template and renamed from `SPRINT_172_notifier_ii.md` to `SPRINT_0172_0001_0002_notifier_ii.md`; content preserved. | Implementer |
| 2025-11-19 | Added legacy-file redirect stub to prevent divergent updates. | Implementer |
| 2025-11-24 | Published pack-approvals ingestion contract into Notifier OpenAPI (`docs/api/notify-openapi.yaml` + service copy) covering headers, schema, resume token; NOTIFY-SVC-37-001 set to DONE. | Implementer |

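The locale fallback chain in the NOTIFY-SVC-38-003 entry (exact locale → language-only → en-us default) can be sketched as follows. This is a minimal Python illustration, not the C# `NotifyTemplateService`; the lower-case normalisation, the `_` to `-` replacement, and the names `fallback_chain` and `resolve_template` are assumptions.

```python
from typing import List, Mapping, Optional

DEFAULT_LOCALE = "en-us"  # default named in the execution log


def fallback_chain(locale: str) -> List[str]:
    """Candidate locales in lookup order: exact, language-only, then the default."""
    # Assumption: locales compare case-insensitively and "_" is treated like "-".
    normalized = locale.strip().lower().replace("_", "-")
    chain: List[str] = []
    if normalized:
        chain.append(normalized)
        language = normalized.split("-", 1)[0]
        if language not in chain:
            chain.append(language)
    if DEFAULT_LOCALE not in chain:
        chain.append(DEFAULT_LOCALE)
    return chain


def resolve_template(templates: Mapping[str, str], locale: str) -> Optional[str]:
    """Return the first template body found along the fallback chain, or None."""
    for candidate in fallback_chain(locale):
        if candidate in templates:
            return templates[candidate]
    return None
```

With templates stored for `de` and `en-us`, a request for `de-AT` resolves to the `de` template, while a request for `ja-JP` falls through to `en-us`.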

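The `SimpleTemplateRenderer` behaviour from NOTIFY-SVC-37-003 (`{{variable}}` substitution over nested payloads, with redaction of secret/password/token/key/apikey/credential keys) might look roughly like this in Python. It is a sketch only: the dotted-path syntax, the `[REDACTED]` marker, and the rule that a key is sensitive when its last segment contains one of the listed terms are assumptions, and `{{#each}}` blocks are omitted.

```python
from __future__ import annotations

import re
from collections.abc import Mapping
from typing import Any

# Terms taken from the log entry; case-insensitive substring matching is an assumption.
SENSITIVE_TERMS = ("secret", "password", "token", "key", "apikey", "credential")
REDACTED = "[REDACTED]"
PLACEHOLDER = re.compile(r"\{\{\s*([A-Za-z0-9_.]+)\s*\}\}")


def is_sensitive(path: str) -> bool:
    """A dotted path is sensitive if its last segment contains a sensitive term."""
    leaf = path.rsplit(".", 1)[-1].lower()
    return any(term in leaf for term in SENSITIVE_TERMS)


def lookup(payload: Mapping[str, Any], path: str) -> Any:
    """Walk a dotted path such as "scan.id" through nested mappings; None if absent."""
    current: Any = payload
    for part in path.split("."):
        if not isinstance(current, Mapping) or part not in current:
            return None
        current = current[part]
    return current


def render(template: str, payload: Mapping[str, Any]) -> str:
    """Replace each {{path}} placeholder, redacting sensitive keys and blanking missing ones."""

    def substitute(match: re.Match[str]) -> str:
        path = match.group(1)
        if is_sensitive(path):
            return REDACTED
        value = lookup(payload, path)
        return "" if value is None else str(value)

    return PLACEHOLDER.sub(substitute, template)
```

Under these assumptions, `render("token={{auth.token}}", {"auth": {"token": "abc"}})` returns `token=[REDACTED]`. Substring matching is deliberately broad (a key such as `monkey` is also redacted), which errs toward hiding data rather than leaking it.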

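The `WebhookChannelAdapter` from NOTIFY-SVC-38-002 combines HMAC signing with exponential-backoff retries, and the NOTIFY-SVC-37-003 dispatcher caps delivery at 3 attempts. A minimal Python sketch of both ideas follows; HMAC-SHA256, the `sha256=` signature prefix, the 0.5 s base delay, and the injectable `sleep` parameter are assumptions, not details taken from the service. Injecting `sleep` keeps the retry loop testable without real waiting.

```python
import hashlib
import hmac
import time
from typing import Callable, List

MAX_ATTEMPTS = 3  # attempt cap stated in the execution log


def sign_payload(secret: bytes, body: bytes) -> str:
    """Hex HMAC-SHA256 of the raw request body (algorithm and prefix are assumptions)."""
    return "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()


def backoff_delays(base_seconds: float, attempts: int = MAX_ATTEMPTS) -> List[float]:
    """Delays between attempts: base, 2*base, 4*base, ... (one fewer than attempts)."""
    return [base_seconds * (2 ** i) for i in range(attempts - 1)]


def send_with_retry(
    send: Callable[[], bool],
    base_seconds: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call send() up to MAX_ATTEMPTS times; return the successful attempt number, or 0."""
    delays = backoff_delays(base_seconds)
    for attempt in range(1, MAX_ATTEMPTS + 1):
        if send():
            return attempt
        if attempt < MAX_ATTEMPTS:
            sleep(delays[attempt - 1])  # wait longer after each failure
    return 0
```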
@@ -19,7 +19,7 @@

| # | Task ID | Status | Key dependency / next step | Owners | Task Definition |
| --- | --- | --- | --- | --- | --- |
| P1 | PREP-NOTIFY-TEN-48-001-NOTIFIER-II-SPRINT-017 | DONE (2025-11-22) | Due 2025-11-23 · Accountable: Notifications Service Guild (`src/Notifier/StellaOps.Notifier`) | Notifications Service Guild (`src/Notifier/StellaOps.Notifier`) | Notifier II (Sprint 0172) not started; tenancy model not finalized. <br><br> Document artefact/deliverable for NOTIFY-TEN-48-001 and publish location so downstream tasks can proceed. Prep artefact: `docs/modules/notifier/prep/2025-11-20-ten-48-001-prep.md`. |
| 1 | NOTIFY-TEN-48-001 | BLOCKED (2025-11-20) | PREP-NOTIFY-TEN-48-001-NOTIFIER-II-SPRINT-017 | Notifications Service Guild (`src/Notifier/StellaOps.Notifier`) | Tenant-scope rules/templates/incidents, RLS on storage, tenant-prefixed channels, include tenant context in notifications. |
| 1 | NOTIFY-TEN-48-001 | DONE | Implemented tenant scoping with RLS and channel resolution. | Notifications Service Guild (`src/Notifier/StellaOps.Notifier`) | Tenant-scope rules/templates/incidents, RLS on storage, tenant-prefixed channels, include tenant context in notifications. |

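The tenant-prefixed channel resolution named in NOTIFY-TEN-48-001 could work along these lines. This Python sketch assumes a `tenant:channel` naming scheme and a `global:` prefix for shared channels; neither the separator nor the prefix is specified in the sprint file, and `resolve_channel` is a hypothetical name.

```python
GLOBAL_PREFIX = "global:"  # hypothetical marker for channels shared by all tenants
SEPARATOR = ":"  # hypothetical tenant/channel separator


def resolve_channel(tenant_id: str, channel_ref: str) -> str:
    """Map a channel reference to a tenant-scoped name, rejecting other tenants' channels."""
    if not tenant_id:
        raise ValueError("tenant_id is required")
    if channel_ref.startswith(GLOBAL_PREFIX):
        return channel_ref  # global channels are shared, so they stay unprefixed
    own_prefix = tenant_id + SEPARATOR
    if channel_ref.startswith(own_prefix):
        return channel_ref  # already scoped to this tenant
    if SEPARATOR in channel_ref:
        # Prefixed with some other tenant: refuse rather than resolve across tenants.
        raise PermissionError(f"channel {channel_ref!r} is not visible to tenant {tenant_id!r}")
    return own_prefix + channel_ref
```

Rejecting a foreign prefix instead of re-prefixing it keeps a mis-scoped reference from silently resolving to a different tenant's channel.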
## Execution Log

| Date (UTC) | Update | Owner |

@@ -30,6 +30,8 @@

| 2025-11-19 | Added legacy-file redirect stub to avoid divergent updates. | Implementer |
| 2025-11-20 | Marked NOTIFY-TEN-48-001 BLOCKED pending completion of Sprint 0172 tenancy model; no executable work in this sprint today. | Implementer |
| 2025-11-22 | Marked all PREP tasks DONE per directive; evidence still to be verified. | Project Mgmt |
| 2025-11-27 | Implemented NOTIFY-TEN-48-001: Created ITenantContext.cs (context and accessor with AsyncLocal), TenantMiddleware.cs (HTTP tenant extraction), ITenantRlsEnforcer.cs (RLS validation with admin/system bypass), ITenantChannelResolver.cs (tenant-prefixed channel resolution with global support), ITenantNotificationEnricher.cs (payload enrichment), TenancyServiceExtensions.cs (DI registration). Updated Program.cs. Added comprehensive unit tests in Tenancy/ directory. | Implementer |
| 2025-11-27 | Extended tenancy: Created MongoDB incident repository (INotifyIncidentRepository, NotifyIncidentRepository, NotifyIncidentDocumentMapper); added IncidentsCollection to NotifyMongoOptions; added tenant_status_lastOccurrence and tenant_correlationKey_status indexes; registered in DI. Added TenantContext.cs and TenantServiceExtensions.cs to Worker for AsyncLocal context propagation. Updated prep doc with implementation details. | Implementer |

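The AsyncLocal-based tenant context and the RLS check with an admin/system bypass from the NOTIFY-TEN-48-001 entries can be approximated in Python with `contextvars`, whose values follow each asyncio task much as `AsyncLocal<T>` flows with .NET async calls. The `TenantContext` fields and the helpers `set_tenant`, `reset_tenant`, `current_tenant` and `ensure_tenant_access` are hypothetical; this is not the C# `ITenantRlsEnforcer` API.

```python
from __future__ import annotations

import contextvars
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TenantContext:
    tenant_id: str
    actor: str
    is_admin: bool = False  # hypothetical flags standing in for the admin/system bypass
    is_system: bool = False


_current: contextvars.ContextVar[Optional[TenantContext]] = contextvars.ContextVar(
    "tenant_context", default=None
)


def set_tenant(ctx: TenantContext) -> contextvars.Token:
    """Bind ctx to the current execution context (cf. AsyncLocal in .NET)."""
    return _current.set(ctx)


def reset_tenant(token: contextvars.Token) -> None:
    """Restore whatever context was bound before the matching set_tenant call."""
    _current.reset(token)


def current_tenant() -> Optional[TenantContext]:
    return _current.get()


def ensure_tenant_access(resource_tenant_id: str) -> None:
    """RLS-style check: the caller's tenant must own the resource unless admin/system."""
    ctx = current_tenant()
    if ctx is None:
        raise PermissionError("no tenant context bound")
    if ctx.is_admin or ctx.is_system:
        return
    if ctx.tenant_id != resource_tenant_id:
        raise PermissionError(
            f"tenant {ctx.tenant_id!r} cannot access data owned by {resource_tenant_id!r}"
        )
```

Because each asyncio task starts from a copy of its parent's context, a tenant bound inside one request handler does not leak into concurrently running handlers.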
## Decisions & Risks

- Requires completion of Notifier II and an established tenancy model before applying RLS.

@@ -25,8 +25,8 @@

| P4 | PREP-TELEMETRY-OBS-56-001-DEPENDS-ON-55-001 | DONE (2025-11-20) | Doc published at `docs/observability/telemetry-sealed-56-001.md`. | Telemetry Core Guild | Depends on 55-001. <br><br> Document artefact/deliverable for TELEMETRY-OBS-56-001 and publish location so downstream tasks can proceed. |
| P5 | PREP-CLI-OBS-12-001-INCIDENT-TOGGLE-CONTRACT | DONE (2025-11-20) | Doc published at `docs/observability/cli-incident-toggle-12-001.md`. | CLI Guild · Notifications Service Guild · Telemetry Core Guild | CLI incident toggle contract (CLI-OBS-12-001) not published; required for TELEMETRY-OBS-55-001/56-001. Provide schema + CLI flag behavior. |
| 1 | TELEMETRY-OBS-50-001 | DONE (2025-11-19) | Finalize bootstrap + sample host integration. | Telemetry Core Guild (`src/Telemetry/StellaOps.Telemetry.Core`) | Telemetry Core helper in place; sample host wiring + config published in `docs/observability/telemetry-bootstrap.md`. |
| 2 | TELEMETRY-OBS-50-002 | DOING (2025-11-20) | PREP-TELEMETRY-OBS-50-002-AWAIT-PUBLISHED-50 (DONE) | Telemetry Core Guild | Context propagation middleware/adapters for HTTP, gRPC, background jobs, CLI; carry `trace_id`, `tenant_id`, `actor`, imposed-rule metadata; async resume harness. Prep artefact: `docs/modules/telemetry/prep/2025-11-20-obs-50-002-prep.md`. |
| 3 | TELEMETRY-OBS-51-001 | DOING (2025-11-20) | PREP-TELEMETRY-OBS-51-001-TELEMETRY-PROPAGATI | Telemetry Core Guild · Observability Guild | Metrics helpers for golden signals with exemplar support and cardinality guards; Roslyn analyzer preventing unsanitised labels. Prep artefact: `docs/modules/telemetry/prep/2025-11-20-obs-51-001-prep.md`. |
| 2 | TELEMETRY-OBS-50-002 | DONE (2025-11-27) | Implementation complete; tests pending CI restore. | Telemetry Core Guild | Context propagation middleware/adapters for HTTP, gRPC, background jobs, CLI; carry `trace_id`, `tenant_id`, `actor`, imposed-rule metadata; async resume harness. Prep artefact: `docs/modules/telemetry/prep/2025-11-20-obs-50-002-prep.md`. |
| 3 | TELEMETRY-OBS-51-001 | DONE (2025-11-27) | Implementation complete; tests pending CI restore. | Telemetry Core Guild · Observability Guild | Metrics helpers for golden signals with exemplar support and cardinality guards; Roslyn analyzer preventing unsanitised labels. Prep artefact: `docs/modules/telemetry/prep/2025-11-20-obs-51-001-prep.md`. |
| 4 | TELEMETRY-OBS-51-002 | BLOCKED (2025-11-20) | PREP-TELEMETRY-OBS-51-002-DEPENDS-ON-51-001 | Telemetry Core Guild · Security Guild | Redaction/scrubbing filters for secrets/PII at logger sink; per-tenant config with TTL; audit overrides; determinism tests. |
| 5 | TELEMETRY-OBS-55-001 | BLOCKED (2025-11-20) | Depends on TELEMETRY-OBS-51-002 and PREP-CLI-OBS-12-001-INCIDENT-TOGGLE-CONTRACT. | Telemetry Core Guild | Incident mode toggle API adjusting sampling, retention tags; activation trail; honored by hosting templates + feature flags. |
| 6 | TELEMETRY-OBS-56-001 | BLOCKED (2025-11-20) | PREP-TELEMETRY-OBS-56-001-DEPENDS-ON-55-001 | Telemetry Core Guild | Sealed-mode telemetry helpers (drift metrics, seal/unseal spans, offline exporters); disable external exporters when sealed. |

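TELEMETRY-OBS-51-001 calls for cardinality guards on metric labels. One common approach, sketched here in Python, caps the number of distinct values recorded per label name and folds anything beyond the cap into a single overflow value, so an unbounded input (for example raw URLs) cannot create unbounded time series. The `__other__` bucket, the per-label limit, and the `CardinalityGuard` class are assumptions, not the behaviour of `GoldenSignalMetricsOptions`.

```python
from collections import defaultdict
from typing import Dict, Mapping, Set

OVERFLOW_VALUE = "__other__"  # hypothetical bucket for values beyond the cap


class CardinalityGuard:
    """Admit at most max_values_per_label distinct values for each label name."""

    def __init__(self, max_values_per_label: int) -> None:
        if max_values_per_label < 1:
            raise ValueError("max_values_per_label must be at least 1")
        self._max = max_values_per_label
        self._seen: Dict[str, Set[str]] = defaultdict(set)

    def sanitize(self, labels: Mapping[str, str]) -> Dict[str, str]:
        """Return labels with over-limit values replaced by OVERFLOW_VALUE."""
        result: Dict[str, str] = {}
        for name, value in labels.items():
            seen = self._seen[name]
            if value in seen or len(seen) < self._max:
                seen.add(value)  # first-come values are kept verbatim
                result[name] = value
            else:
                result[name] = OVERFLOW_VALUE
        return result
```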
@@ -34,6 +34,9 @@

## Execution Log

| Date (UTC) | Update | Owner |
| --- | --- | --- |
| 2025-11-27 | Implemented TELEMETRY-OBS-50-002: Added `TelemetryContext`, `TelemetryContextAccessor` (AsyncLocal-based), `TelemetryContextPropagationMiddleware` (HTTP), `TelemetryContextPropagator` (DelegatingHandler), `TelemetryContextInjector` (gRPC/queue helpers), `TelemetryContextJobScope` (async resume harness). DI extensions added via `AddTelemetryContextPropagation()`. | Telemetry Core Guild |
| 2025-11-27 | Implemented TELEMETRY-OBS-51-001: Added `GoldenSignalMetrics` (latency histogram, error/request counters, saturation gauge), `GoldenSignalMetricsOptions` (cardinality limits, exemplar toggle, prefix). Includes `MeasureLatency()` scope helper and `Tag()` factory. DI extensions added via `AddGoldenSignalMetrics()`. | Telemetry Core Guild |
| 2025-11-27 | Added unit tests for context propagation (`TelemetryContextTests`, `TelemetryContextAccessorTests`) and golden signal metrics (`GoldenSignalMetricsTests`). Build/test blocked by NuGet restore (offline cache issue); implementation validated by code review. | Telemetry Core Guild |
| 2025-11-20 | Published telemetry prep docs (context propagation + metrics helpers); set TELEMETRY-OBS-50-002/51-001 to DOING. | Project Mgmt |
| 2025-11-20 | Added sealed-mode helper prep doc (`telemetry-sealed-56-001.md`); marked PREP-TELEMETRY-OBS-56-001 DONE. | Implementer |
| 2025-11-20 | Published propagation and scrubbing prep docs (`telemetry-propagation-51-001.md`, `telemetry-scrub-51-002.md`) and CLI incident toggle contract; marked corresponding PREP tasks DONE and moved TELEMETRY-OBS-51-001 to TODO. | Implementer |

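The context propagation described for TELEMETRY-OBS-50-002 carries `trace_id`, `tenant_id` and `actor` across process boundaries. The Python sketch below shows the inject/extract round trip over a header-like carrier; the `x-trace-id`, `x-tenant-id` and `x-actor` keys are hypothetical (a production setup would more likely use the W3C `traceparent` header for the trace), and imposed-rule metadata is left out.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Mapping, MutableMapping, Optional

# Hypothetical carrier keys; the service's real header names are not given in the log.
TRACE_ID_KEY = "x-trace-id"
TENANT_ID_KEY = "x-tenant-id"
ACTOR_KEY = "x-actor"


@dataclass(frozen=True)
class TelemetryContext:
    trace_id: str
    tenant_id: Optional[str] = None
    actor: Optional[str] = None


def inject(ctx: TelemetryContext, carrier: MutableMapping[str, str]) -> None:
    """Write the context into outgoing headers or queue-message properties."""
    carrier[TRACE_ID_KEY] = ctx.trace_id
    if ctx.tenant_id:
        carrier[TENANT_ID_KEY] = ctx.tenant_id
    if ctx.actor:
        carrier[ACTOR_KEY] = ctx.actor


def extract(carrier: Mapping[str, str]) -> Optional[TelemetryContext]:
    """Rebuild the context on the receiving side; None when no trace id is present."""
    # HTTP header names are case-insensitive, so compare keys in lower case.
    normalized = {key.lower(): value for key, value in carrier.items()}
    trace_id = normalized.get(TRACE_ID_KEY)
    if not trace_id:
        return None
    return TelemetryContext(trace_id, normalized.get(TENANT_ID_KEY), normalized.get(ACTOR_KEY))
```

On the receiving side, the extracted context would then be bound to an ambient, AsyncLocal-style accessor so downstream code can read it without parameter threading.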