up

This commit is contained in:

@@ -1,944 +0,0 @@

|

||||

Here’s a clean, action‑ready blueprint for a **public reachability benchmark** you can stand up quickly and grow over time.

|

||||

|

||||

# Why this matters (quick)

|

||||

|

||||

“Reachability” asks: *is a flagged vulnerability actually executable from real entry points in this codebase/container?* A public, reproducible benchmark lets you compare tools apples‑to‑apples, drive research, and keep vendors honest.

|

||||

|

||||

# What to collect (dataset design)

|

||||

|

||||

* **Projects & languages**

|

||||

|

||||

* Polyglot mix: **C/C++ (ELF/PE/Mach‑O)**, **Java/Kotlin**, **C#/.NET**, **Python**, **JavaScript/TypeScript**, **PHP**, **Go**, **Rust**.

|

||||

* For each project: small (≤5k LOC), medium (5–100k), large (100k+).

|

||||

* **Ground‑truth artifacts**

|

||||

|

||||

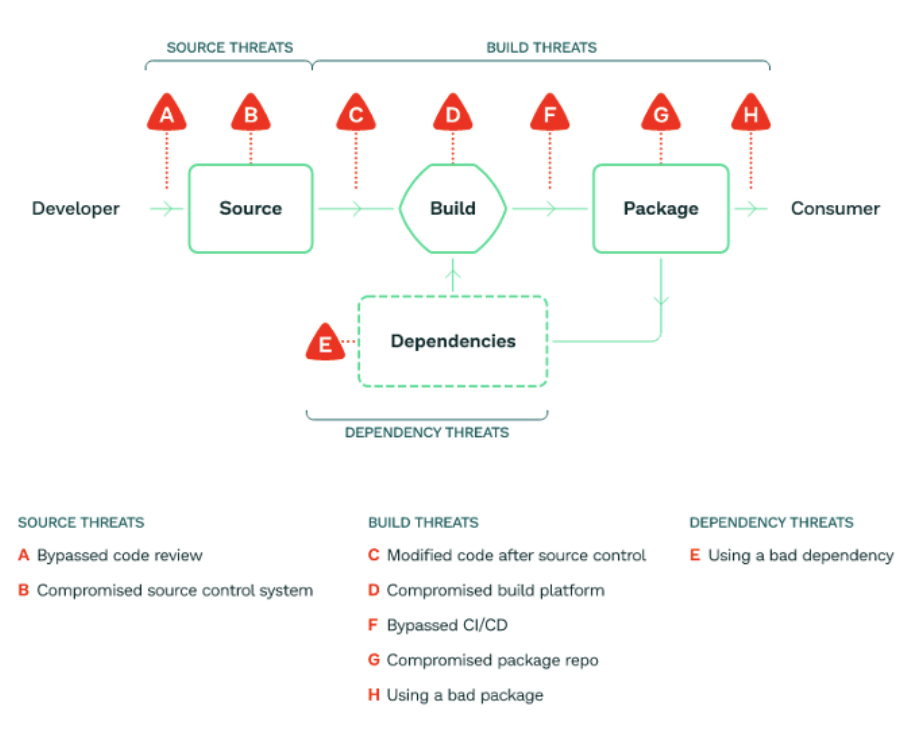

* **Seed CVEs** with known sinks (e.g., deserializers, command exec, SS RF) and **neutral projects** with *no* reachable path (negatives).

|

||||

* **Exploit oracles**: minimal PoCs or unit tests that (1) reach the sink and (2) toggle reachability via feature flags.

|

||||

* **Build outputs (deterministic)**

|

||||

|

||||

* **Reproducible binaries/bytecode** (strip timestamps; fixed seeds; SOURCE_DATE_EPOCH).

|

||||

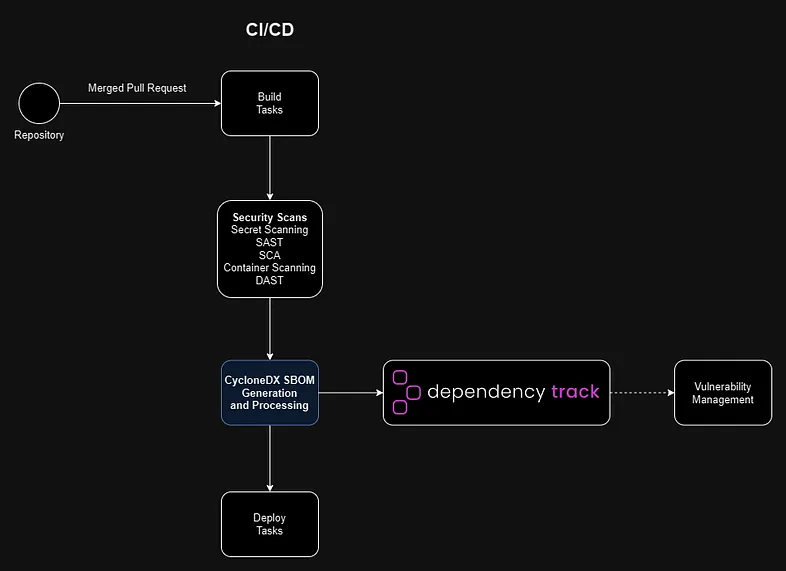

* **SBOM** (CycloneDX/SPDX) + **PURLs** + **Build‑ID** (ELF .note.gnu.build‑id / PE Authentihash / Mach‑O UUID).

|

||||

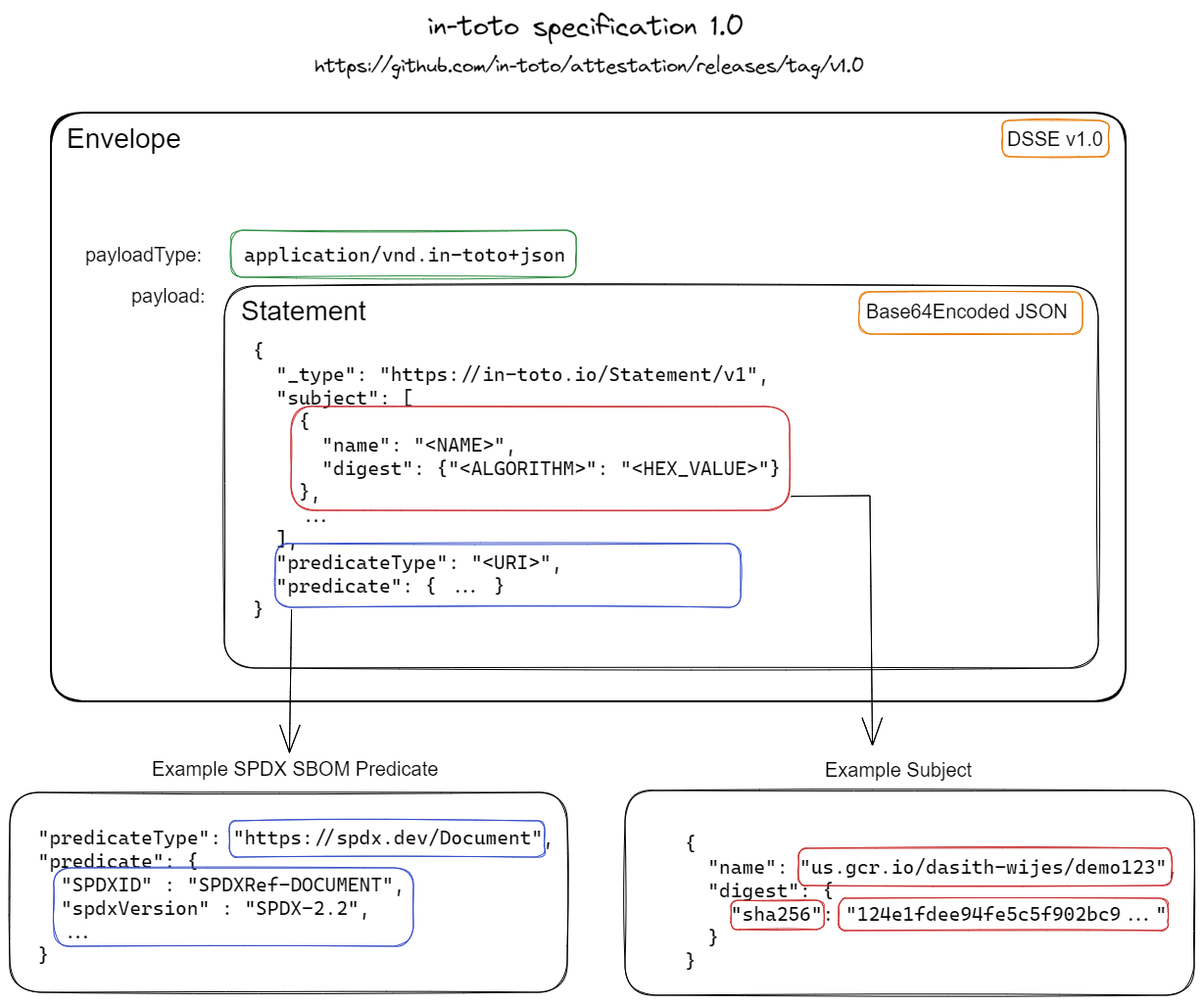

* **Attestations**: in‑toto/DSSE envelopes recording toolchain versions, flags, hashes.

|

||||

* **Execution traces (for truth)**

|

||||

|

||||

* **CI traces**: call‑graph dumps from compilers/analyzers; unit‑test coverage; optional **dynamic traces** (eBPF/.NET ETW/Java Flight Recorder).

|

||||

* **Entry‑point manifests**: HTTP routes, CLI commands, cron/queue consumers.

|

||||

* **Metadata**

|

||||

|

||||

* Language, framework, package manager, compiler versions, OS/container image, optimization level, stripping info, license.

|

||||

|

||||

# How to label ground truth

|

||||

|

||||

* **Per‑vuln case**: `(component, version, sink_id)` with label **reachable / unreachable / unknown**.

|

||||

* **Evidence bundle**: pointer to (a) static call path, (b) dynamic hit (trace/coverage), or (c) rationale for negative.

|

||||

* **Confidence**: high (static+dynamic agree), medium (one source), low (heuristic only).

|

||||

|

||||

# Scoring (simple + fair)

|

||||

|

||||

* **Binary classification** on cases:

|

||||

|

||||

* Precision, Recall, F1. Report **AU‑PR** if you output probabilities.

|

||||

* **Path quality**

|

||||

|

||||

* **Explainability score (0–3)**:

|

||||

|

||||

* 0: “vuln reachable” w/o context

|

||||

* 1: names only (entry→…→sink)

|

||||

* 2: full interprocedural path w/ locations

|

||||

* 3: plus **inputs/guards** (taint/constraints, env flags)

|

||||

* **Runtime cost**

|

||||

|

||||

* Wall‑clock, peak RAM, image size; normalized by KLOC.

|

||||

* **Determinism**

|

||||

|

||||

* Re‑run variance (≤1% is “A”, 1–5% “B”, >5% “C”).

|

||||

|

||||

# Avoiding overfitting

|

||||

|

||||

* **Train/Dev/Test** splits per language; **hidden test** projects rotated quarterly.

|

||||

* **Case churn**: introduce **isomorphic variants** (rename symbols, reorder files) to punish memorization.

|

||||

* **Poisoned controls**: include decoy sinks and unreachable dead‑code traps.

|

||||

* **Submission rules**: require **attestations** of tool versions & flags; limit per‑case hints.

|

||||

|

||||

# Reference baselines (to run out‑of‑the‑box)

|

||||

|

||||

* **Snyk Code/Reachability** (JS/Java/Python, SaaS/CLI).

|

||||

* **Semgrep + Pro Engine** (rules + reachability mode).

|

||||

* **CodeQL** (multi‑lang, LGTM‑style queries).

|

||||

* **Joern** (C/C++/JVM code property graphs).

|

||||

* **angr** (binary symbolic exec; selective for native samples).

|

||||

* **Language‑specific**: pip‑audit w/ import graphs, npm with lock‑tree + route discovery, Maven + call‑graph (Soot/WALA).

|

||||

|

||||

# Submission format (one JSON per tool run)

|

||||

|

||||

```json

|

||||

{

|

||||

"tool": {"name": "YourTool", "version": "1.2.3"},

|

||||

"run": {

|

||||

"commit": "…",

|

||||

"platform": "ubuntu:24.04",

|

||||

"time_s": 182.4, "peak_mb": 3072

|

||||

},

|

||||

"cases": [

|

||||

{

|

||||

"id": "php-shop:fastjson@1.2.68:Sink#deserialize",

|

||||

"prediction": "reachable",

|

||||

"confidence": 0.88,

|

||||

"explain": {

|

||||

"entry": "POST /api/orders",

|

||||

"path": [

|

||||

"OrdersController::create",

|

||||

"Serializer::deserialize",

|

||||

"Fastjson::parseObject"

|

||||

],

|

||||

"guards": ["feature.flag.json_enabled==true"]

|

||||

}

|

||||

}

|

||||

],

|

||||

"artifacts": {

|

||||

"sbom": "sha256:…", "attestation": "sha256:…"

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

# Folder layout (repo)

|

||||

|

||||

```

|

||||

/benchmark

|

||||

/cases/<lang>/<project>/<case_id>/

|

||||

case.yaml # component@version, sink, labels, evidence refs

|

||||

entrypoints.yaml # routes/CLIs/cron

|

||||

build/ # Dockerfiles, lockfiles, pinned toolchains

|

||||

outputs/ # SBOMs, binaries, traces (checksummed)

|

||||

/splits/{train,dev,test}.txt

|

||||

/schemas/{case.json,submission.json}

|

||||

/scripts/{build.sh, run_tests.sh, score.py}

|

||||

/docs/ (how-to, FAQs, T&Cs)

|

||||

```

|

||||

|

||||

# Minimal **v1** (4–6 weeks of work)

|

||||

|

||||

1. **Languages**: JS/TS, Python, Java, C (ELF).

|

||||

2. **20–30 cases**: mix of reachable/unreachable with PoC unit tests.

|

||||

3. **Deterministic builds** in containers; publish SBOM+attestations.

|

||||

4. **Scorer**: precision/recall/F1 + explainability, runtime, determinism.

|

||||

5. **Baselines**: run CodeQL + Semgrep across all; Snyk where feasible; angr for 3 native cases.

|

||||

6. **Website**: static leaderboard (per‑lang, per‑size), download links, submission guide.

|

||||

|

||||

# V2+ (quarterly)

|

||||

|

||||

* Add **.NET, PHP, Go, Rust**; broaden binary focus (PE/Mach‑O).

|

||||

* Add **dynamic traces** (eBPF/ETW/JFR) and **taint oracles**.

|

||||

* Introduce **config‑gated reachability** (feature flags, env, k8s secrets).

|

||||

* Add **dataset cards** per case (threat model, CWE, false‑positive traps).

|

||||

|

||||

# Publishing & governance

|

||||

|

||||

* License: **CC‑BY‑SA** for metadata, **source‑compatible OSS** for code, binaries under original licenses.

|

||||

* **Repro packs**: `benchmark-kit.tgz` with container recipes, hashes, and attestations.

|

||||

* **Disclosure**: CVE hygiene, responsible use, opt‑out path for upstreams.

|

||||

* **Stewards**: small TAC (you + two external reviewers) to approve new cases and adjudicate disputes.

|

||||

|

||||

# Immediate next steps (checklist)

|

||||

|

||||

* Lock the **schemas** (case + submission + attestation fields).

|

||||

* Pick 8 seed projects (2 per language tiered by size).

|

||||

* Draft 12 sink‑cases (6 reachable, 6 unreachable) with unit‑test oracles.

|

||||

* Script deterministic builds and **hash‑locked SBOMs**.

|

||||

* Implement the scorer; publish a **starter leaderboard** with 2 baselines.

|

||||

* Ship **v1 website/docs** and open submissions.

|

||||

|

||||

If you want, I can generate the repo scaffold (folders, YAML/JSON schemas, Dockerfiles, scorer script) so your team can `git clone` and start adding cases immediately.

|

||||

Cool, let’s turn the blueprint into a concrete, developer‑friendly implementation plan.

|

||||

|

||||

I’ll assume **v1 scope** is:

|

||||

|

||||

* Languages: **JavaScript/TypeScript (Node)**, **Python**, **Java**, **C (ELF)**

|

||||

* ~**20–30 cases** total (reachable/unreachable mix)

|

||||

* Baselines: **CodeQL**, **Semgrep**, maybe **Snyk** where licenses allow, and **angr** for a few native cases

|

||||

|

||||

You can expand later, but this plan is enough to get v1 shipped.

|

||||

|

||||

---

|

||||

|

||||

## 0. Overall project structure & ownership

|

||||

|

||||

**Owners**

|

||||

|

||||

* **Tech Lead** – owns architecture & final decisions

|

||||

* **Benchmark Core** – 2–3 devs building schemas, scorer, infra

|

||||

* **Language Tracks** – 1 dev per language (JS, Python, Java, C)

|

||||

* **Website/Docs** – 1 dev

|

||||

|

||||

**Repo layout (target)**

|

||||

|

||||

```text

|

||||

reachability-benchmark/

|

||||

README.md

|

||||

LICENSE

|

||||

CONTRIBUTING.md

|

||||

CODE_OF_CONDUCT.md

|

||||

|

||||

benchmark/

|

||||

cases/

|

||||

js/

|

||||

express-blog/

|

||||

case-001/

|

||||

case.yaml

|

||||

entrypoints.yaml

|

||||

build/

|

||||

Dockerfile

|

||||

build.sh

|

||||

src/ # project source (or submodule)

|

||||

tests/ # unit tests as oracles

|

||||

outputs/

|

||||

sbom.cdx.json

|

||||

binary.tar.gz

|

||||

coverage.json

|

||||

traces/ # optional dynamic traces

|

||||

py/

|

||||

flask-api/...

|

||||

java/

|

||||

spring-app/...

|

||||

c/

|

||||

httpd-like/...

|

||||

schemas/

|

||||

case.schema.yaml

|

||||

entrypoints.schema.yaml

|

||||

truth.schema.yaml

|

||||

submission.schema.json

|

||||

tools/

|

||||

scorer/

|

||||

rb_score/

|

||||

__init__.py

|

||||

cli.py

|

||||

metrics.py

|

||||

loader.py

|

||||

explainability.py

|

||||

pyproject.toml

|

||||

tests/

|

||||

build/

|

||||

build_all.py

|

||||

validate_builds.py

|

||||

|

||||

baselines/

|

||||

codeql/

|

||||

run_case.sh

|

||||

config/

|

||||

semgrep/

|

||||

run_case.sh

|

||||

rules/

|

||||

snyk/

|

||||

run_case.sh

|

||||

angr/

|

||||

run_case.sh

|

||||

|

||||

ci/

|

||||

github/

|

||||

benchmark.yml

|

||||

|

||||

website/

|

||||

# static site / leaderboard

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## 1. Phase 1 – Repo & infra setup

|

||||

|

||||

### Task 1.1 – Create repository

|

||||

|

||||

**Developer:** Tech Lead

|

||||

**Deliverables:**

|

||||

|

||||

* Repo created (`reachability-benchmark` or similar)

|

||||

* `LICENSE` (e.g., Apache-2.0 or MIT)

|

||||

* Basic `README.md` describing:

|

||||

|

||||

* Purpose (public reachability benchmark)

|

||||

* High‑level design

|

||||

* v1 scope (langs, #cases)

|

||||

|

||||

### Task 1.2 – Bootstrap structure

|

||||

|

||||

**Developer:** Benchmark Core

|

||||

|

||||

Create directory skeleton as above (without filling everything yet).

|

||||

|

||||

Add:

|

||||

|

||||

```bash

|

||||

# benchmark/Makefile

|

||||

.PHONY: test lint build

|

||||

test:

|

||||

\tpytest benchmark/tools/scorer/tests

|

||||

|

||||

lint:

|

||||

\tblack benchmark/tools/scorer

|

||||

\tflake8 benchmark/tools/scorer

|

||||

|

||||

build:

|

||||

\tpython benchmark/tools/build/build_all.py

|

||||

```

|

||||

|

||||

### Task 1.3 – Coding standards & tooling

|

||||

|

||||

**Developer:** Benchmark Core

|

||||

|

||||

* Add `.editorconfig`, `.gitignore`, and Python tool configs (`ruff`, `black`, or `flake8`).

|

||||

* Define minimal **PR checklist** in `CONTRIBUTING.md`:

|

||||

|

||||

* Tests pass

|

||||

* Lint passes

|

||||

* New schemas have JSON schema or YAML schema and tests

|

||||

* New cases come with oracles (tests/coverage)

|

||||

|

||||

---

|

||||

|

||||

## 2. Phase 2 – Case & submission schemas

|

||||

|

||||

### Task 2.1 – Define case metadata format

|

||||

|

||||

**Developer:** Benchmark Core

|

||||

|

||||

Create `benchmark/schemas/case.schema.yaml` and an example `case.yaml`.

|

||||

|

||||

**Example `case.yaml`**

|

||||

|

||||

```yaml

|

||||

id: "js-express-blog:001"

|

||||

language: "javascript"

|

||||

framework: "express"

|

||||

size: "small" # small | medium | large

|

||||

component:

|

||||

name: "express-blog"

|

||||

version: "1.0.0-bench"

|

||||

vulnerability:

|

||||

cve: "CVE-XXXX-YYYY"

|

||||

cwe: "CWE-502"

|

||||

description: "Unsafe deserialization via user-controlled JSON."

|

||||

sink_id: "Deserializer::parse"

|

||||

ground_truth:

|

||||

label: "reachable" # reachable | unreachable | unknown

|

||||

confidence: "high" # high | medium | low

|

||||

evidence_files:

|

||||

- "truth.yaml"

|

||||

notes: >

|

||||

Unit test test_reachable_deserialization triggers the sink.

|

||||

build:

|

||||

dockerfile: "build/Dockerfile"

|

||||

build_script: "build/build.sh"

|

||||

output:

|

||||

artifact_path: "outputs/binary.tar.gz"

|

||||

sbom_path: "outputs/sbom.cdx.json"

|

||||

coverage_path: "outputs/coverage.json"

|

||||

traces_dir: "outputs/traces"

|

||||

environment:

|

||||

os_image: "ubuntu:24.04"

|

||||

compiler: null

|

||||

runtime:

|

||||

node: "20.11.0"

|

||||

source_date_epoch: 1730000000

|

||||

```

|

||||

|

||||

**Acceptance criteria**

|

||||

|

||||

* Schema validates sample `case.yaml` with a Python script:

|

||||

|

||||

* `benchmark/tools/build/validate_schema.py` using `jsonschema` or `pykwalify`.

|

||||

|

||||

---

|

||||

|

||||

### Task 2.2 – Entry points schema

|

||||

|

||||

**Developer:** Benchmark Core

|

||||

|

||||

`benchmark/schemas/entrypoints.schema.yaml`

|

||||

|

||||

**Example `entrypoints.yaml`**

|

||||

|

||||

```yaml

|

||||

entries:

|

||||

http:

|

||||

- id: "POST /api/posts"

|

||||

route: "/api/posts"

|

||||

method: "POST"

|

||||

handler: "PostsController.create"

|

||||

cli:

|

||||

- id: "generate-report"

|

||||

command: "node cli.js generate-report"

|

||||

description: "Generates summary report."

|

||||

scheduled:

|

||||

- id: "daily-cleanup"

|

||||

schedule: "0 3 * * *"

|

||||

handler: "CleanupJob.run"

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

### Task 2.3 – Ground truth / truth schema

|

||||

|

||||

**Developer:** Benchmark Core + Language Tracks

|

||||

|

||||

`benchmark/schemas/truth.schema.yaml`

|

||||

|

||||

**Example `truth.yaml`**

|

||||

|

||||

```yaml

|

||||

id: "js-express-blog:001"

|

||||

cases:

|

||||

- sink_id: "Deserializer::parse"

|

||||

label: "reachable"

|

||||

dynamic_evidence:

|

||||

covered_by_tests:

|

||||

- "tests/test_reachable_deserialization.js::should_reach_sink"

|

||||

coverage_files:

|

||||

- "outputs/coverage.json"

|

||||

static_evidence:

|

||||

call_path:

|

||||

- "POST /api/posts"

|

||||

- "PostsController.create"

|

||||

- "PostsService.createFromJson"

|

||||

- "Deserializer.parse"

|

||||

config_conditions:

|

||||

- "process.env.FEATURE_JSON_ENABLED == 'true'"

|

||||

notes: "If FEATURE_JSON_ENABLED=false, path is unreachable."

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

### Task 2.4 – Submission schema

|

||||

|

||||

**Developer:** Benchmark Core

|

||||

|

||||

`benchmark/schemas/submission.schema.json`

|

||||

|

||||

**Shape**

|

||||

|

||||

```json

|

||||

{

|

||||

"tool": { "name": "YourTool", "version": "1.2.3" },

|

||||

"run": {

|

||||

"commit": "abcd1234",

|

||||

"platform": "ubuntu:24.04",

|

||||

"time_s": 182.4,

|

||||

"peak_mb": 3072

|

||||

},

|

||||

"cases": [

|

||||

{

|

||||

"id": "js-express-blog:001",

|

||||

"prediction": "reachable",

|

||||

"confidence": 0.88,

|

||||

"explain": {

|

||||

"entry": "POST /api/posts",

|

||||

"path": [

|

||||

"PostsController.create",

|

||||

"PostsService.createFromJson",

|

||||

"Deserializer.parse"

|

||||

],

|

||||

"guards": [

|

||||

"process.env.FEATURE_JSON_ENABLED === 'true'"

|

||||

]

|

||||

}

|

||||

}

|

||||

],

|

||||

"artifacts": {

|

||||

"sbom": "sha256:...",

|

||||

"attestation": "sha256:..."

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

Write Python validation utility:

|

||||

|

||||

```bash

|

||||

python benchmark/tools/scorer/validate_submission.py submission.json

|

||||

```

|

||||

|

||||

**Acceptance criteria**

|

||||

|

||||

* Validation fails on missing fields / wrong enum values.

|

||||

* At least two sample submissions pass validation (e.g., “perfect” and “random baseline”).

|

||||

|

||||

---

|

||||

|

||||

## 3. Phase 3 – Reference projects & deterministic builds

|

||||

|

||||

### Task 3.1 – Select and vendor v1 projects

|

||||

|

||||

**Developer:** Tech Lead + Language Tracks

|

||||

|

||||

For each language, choose:

|

||||

|

||||

* 1 small toy app (simple web or CLI)

|

||||

* 1 medium app (more routes, multiple modules)

|

||||

* Optional: 1 large (for performance stress tests)

|

||||

|

||||

Add them under `benchmark/cases/<lang>/<project>/src/`

|

||||

(or as git submodules if you want to track upstream).

|

||||

|

||||

---

|

||||

|

||||

### Task 3.2 – Deterministic Docker build per project

|

||||

|

||||

**Developer:** Language Tracks

|

||||

|

||||

For each project:

|

||||

|

||||

* Create `build/Dockerfile`

|

||||

* Create `build/build.sh` that:

|

||||

|

||||

* Builds the app

|

||||

* Produces artifacts

|

||||

* Generates SBOM and attestation

|

||||

|

||||

**Example `build/Dockerfile` (Node)**

|

||||

|

||||

```dockerfile

|

||||

FROM node:20.11-slim

|

||||

|

||||

ENV NODE_ENV=production

|

||||

ENV SOURCE_DATE_EPOCH=1730000000

|

||||

|

||||

WORKDIR /app

|

||||

COPY src/ /app

|

||||

COPY package.json package-lock.json /app/

|

||||

|

||||

RUN npm ci --ignore-scripts && \

|

||||

npm run build || true

|

||||

|

||||

CMD ["node", "server.js"]

|

||||

```

|

||||

|

||||

**Example `build.sh`**

|

||||

|

||||

```bash

|

||||

#!/usr/bin/env bash

|

||||

set -euo pipefail

|

||||

|

||||

ROOT_DIR="$(dirname "$(readlink -f "$0")")/.."

|

||||

OUT_DIR="$ROOT_DIR/outputs"

|

||||

mkdir -p "$OUT_DIR"

|

||||

|

||||

IMAGE_TAG="rb-js-express-blog:1"

|

||||

|

||||

docker build -t "$IMAGE_TAG" "$ROOT_DIR/build"

|

||||

|

||||

# Export image as tarball (binary artifact)

|

||||

docker save "$IMAGE_TAG" | gzip > "$OUT_DIR/binary.tar.gz"

|

||||

|

||||

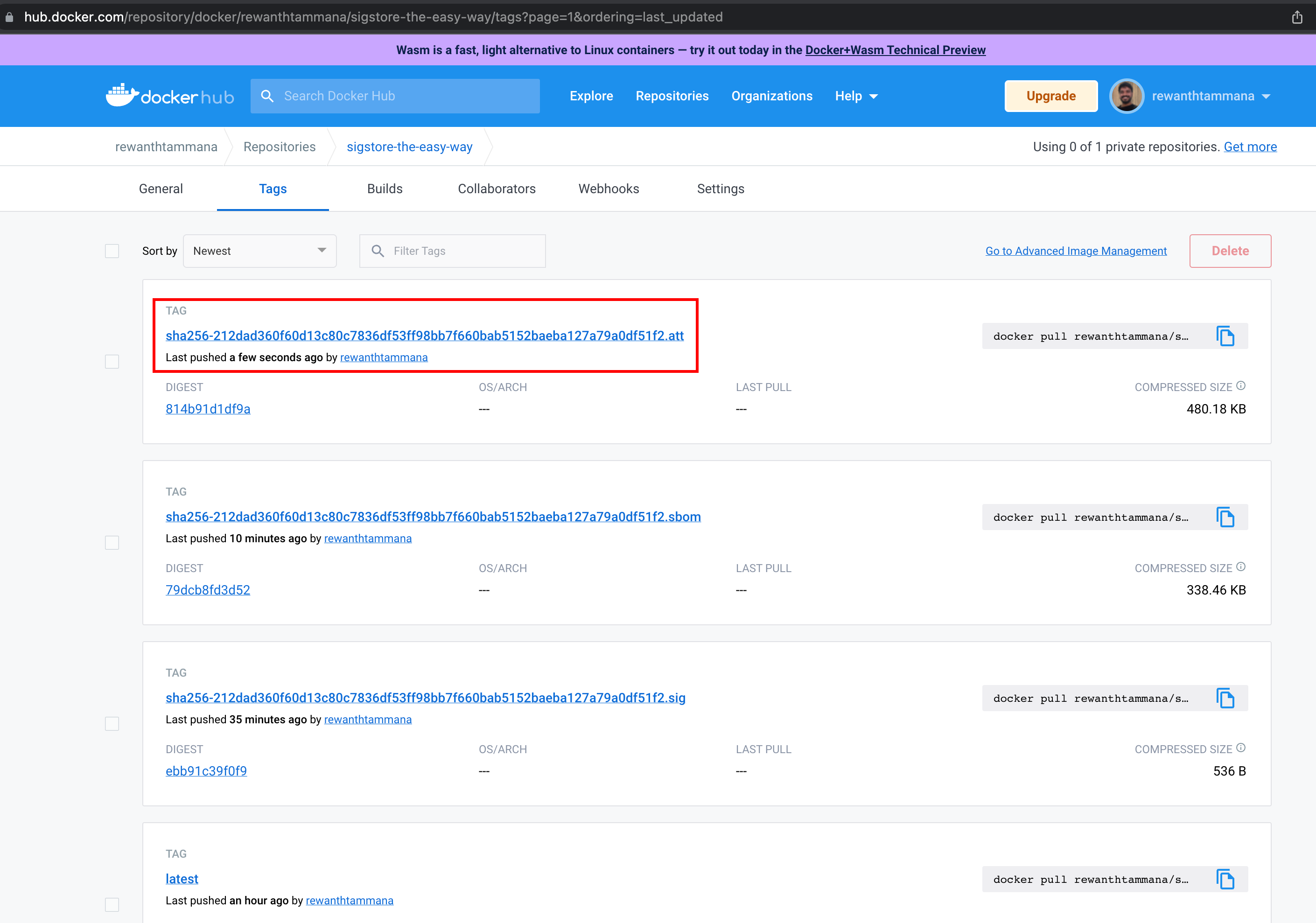

# Generate SBOM (e.g. via syft) – can be optional stub for v1

|

||||

syft packages "docker:$IMAGE_TAG" -o cyclonedx-json > "$OUT_DIR/sbom.cdx.json"

|

||||

|

||||

# In future: generate in-toto attestations

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

### Task 3.3 – Determinism checker

|

||||

|

||||

**Developer:** Benchmark Core

|

||||

|

||||

`benchmark/tools/build/validate_builds.py`:

|

||||

|

||||

* For each case:

|

||||

|

||||

* Run `build.sh` twice

|

||||

* Compare hashes of `outputs/binary.tar.gz` and `outputs/sbom.cdx.json`

|

||||

* Fail if hashes differ.

|

||||

|

||||

**Acceptance criteria**

|

||||

|

||||

* All v1 cases produce identical artifacts across two builds on CI.

|

||||

|

||||

---

|

||||

|

||||

## 4. Phase 4 – Ground truth oracles (tests & traces)

|

||||

|

||||

### Task 4.1 – Add unit/integration tests for reachable cases

|

||||

|

||||

**Developer:** Language Tracks

|

||||

|

||||

For each **reachable** case:

|

||||

|

||||

* Add `tests/` under the project to:

|

||||

|

||||

* Start the app (if necessary)

|

||||

* Send a request/trigger that reaches the vulnerable sink

|

||||

* Assert that a sentinel side effect occurs (e.g. log or marker file) instead of real exploitation.

|

||||

|

||||

Example for Node using Jest:

|

||||

|

||||

```js

|

||||

test("should reach deserialization sink", async () => {

|

||||

const res = await request(app)

|

||||

.post("/api/posts")

|

||||

.send({ title: "x", body: '{"__proto__":{}}' });

|

||||

|

||||

expect(res.statusCode).toBe(200);

|

||||

// Sink logs "REACH_SINK" – we check log or variable

|

||||

expect(sinkWasReached()).toBe(true);

|

||||

});

|

||||

```

|

||||

|

||||

### Task 4.2 – Instrument coverage

|

||||

|

||||

**Developer:** Language Tracks

|

||||

|

||||

* For each language, pick a coverage tool:

|

||||

|

||||

* JS: `nyc` + `istanbul`

|

||||

* Python: `coverage.py`

|

||||

* Java: `jacoco`

|

||||

* C: `gcov`/`llvm-cov` (optional for v1)

|

||||

|

||||

* Ensure running tests produces `outputs/coverage.json` or `.xml` that we then convert to a simple JSON format:

|

||||

|

||||

```json

|

||||

{

|

||||

"files": {

|

||||

"src/controllers/posts.js": {

|

||||

"lines_covered": [12, 13, 14, 27],

|

||||

"lines_total": 40

|

||||

}

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

Create a small converter script if needed.

|

||||

|

||||

### Task 4.3 – Optional dynamic traces

|

||||

|

||||

If you want richer evidence:

|

||||

|

||||

* JS: add middleware that logs `(entry_id, handler, sink)` triples to `outputs/traces/traces.json`

|

||||

* Python: similar using decorators

|

||||

* C/Java: out of scope for v1 unless you want to invest extra time.

|

||||

|

||||

---

|

||||

|

||||

## 5. Phase 5 – Scoring tool (CLI)

|

||||

|

||||

### Task 5.1 – Implement `rb-score` library + CLI

|

||||

|

||||

**Developer:** Benchmark Core

|

||||

|

||||

Create `benchmark/tools/scorer/rb_score/` with:

|

||||

|

||||

* `loader.py`

|

||||

|

||||

* Load all `case.yaml`, `truth.yaml` into memory.

|

||||

* Provide functions: `load_cases() -> Dict[case_id, Case]`.

|

||||

|

||||

* `metrics.py`

|

||||

|

||||

* Implement:

|

||||

|

||||

* `compute_precision_recall(truth, predictions)`

|

||||

* `compute_path_quality_score(explain_block)` (0–3)

|

||||

* `compute_runtime_stats(run_block)`

|

||||

|

||||

* `cli.py`

|

||||

|

||||

* CLI:

|

||||

|

||||

```bash

|

||||

rb-score \

|

||||

--cases-root benchmark/cases \

|

||||

--submission submissions/mytool.json \

|

||||

--output results/mytool_results.json

|

||||

```

|

||||

|

||||

**Pseudo-code for core scoring**

|

||||

|

||||

```python

|

||||

def score_submission(truth, submission):

|

||||

y_true = []

|

||||

y_pred = []

|

||||

per_case_scores = {}

|

||||

|

||||

for case in truth:

|

||||

gt = truth[case.id].label # reachable/unreachable

|

||||

pred_case = find_pred_case(submission.cases, case.id)

|

||||

pred_label = pred_case.prediction if pred_case else "unreachable"

|

||||

|

||||

y_true.append(gt == "reachable")

|

||||

y_pred.append(pred_label == "reachable")

|

||||

|

||||

explain_score = explainability(pred_case.explain if pred_case else None)

|

||||

|

||||

per_case_scores[case.id] = {

|

||||

"gt": gt,

|

||||

"pred": pred_label,

|

||||

"explainability": explain_score,

|

||||

}

|

||||

|

||||

precision, recall, f1 = compute_prf(y_true, y_pred)

|

||||

|

||||

return {

|

||||

"summary": {

|

||||

"precision": precision,

|

||||

"recall": recall,

|

||||

"f1": f1,

|

||||

"num_cases": len(truth),

|

||||

},

|

||||

"cases": per_case_scores,

|

||||

}

|

||||

```

|

||||

|

||||

### Task 5.2 – Explainability scoring rules

|

||||

|

||||

**Developer:** Benchmark Core

|

||||

|

||||

Implement `explainability(explain)`:

|

||||

|

||||

* 0 – `explain` missing or `path` empty

|

||||

* 1 – `path` present with at least 2 nodes (sink + one function)

|

||||

* 2 – `path` contains:

|

||||

|

||||

* Entry label (HTTP route/CLI id)

|

||||

* ≥3 nodes (entry → … → sink)

|

||||

* 3 – Level 2 plus `guards` list non-empty

|

||||

|

||||

Unit tests for at least 4 scenarios.

|

||||

|

||||

### Task 5.3 – Regression tests for scoring

|

||||

|

||||

Add small test fixture:

|

||||

|

||||

* Tiny synthetic benchmark: 3 cases, 2 reachable, 1 unreachable.

|

||||

* 3 submissions:

|

||||

|

||||

* Perfect

|

||||

* All reachable

|

||||

* All unreachable

|

||||

|

||||

Assertions:

|

||||

|

||||

* Perfect: `precision=1, recall=1`

|

||||

* All reachable: `recall=1, precision<1`

|

||||

* All unreachable: `precision=1 (trivially on negatives), recall=0`

|

||||

|

||||

---

|

||||

|

||||

## 6. Phase 6 – Baseline integrations

|

||||

|

||||

### Task 6.1 – Semgrep baseline

|

||||

|

||||

**Developer:** Benchmark Core (with Semgrep experience)

|

||||

|

||||

* `baselines/semgrep/run_case.sh`:

|

||||

|

||||

* Inputs: `case_id`, `cases_root`, `output_path`

|

||||

* Steps:

|

||||

|

||||

* Find `src/` for case

|

||||

* Run `semgrep --config auto` or curated rules

|

||||

* Convert Semgrep findings into benchmark submission format:

|

||||

|

||||

* Map Semgrep rules → vulnerability types → candidate sinks

|

||||

* Heuristically guess reachability (for v1, maybe always “reachable” if sink in code path)

|

||||

* Output: `output_path` JSON conforming to `submission.schema.json`.

|

||||

|

||||

### Task 6.2 – CodeQL baseline

|

||||

|

||||

* Create CodeQL databases for each project (likely via `codeql database create`).

|

||||

* Create queries targeting known sinks (e.g., `Deserialization`, `CommandInjection`).

|

||||

* `baselines/codeql/run_case.sh`:

|

||||

|

||||

* Build DB (or reuse)

|

||||

* Run queries

|

||||

* Translate results into our submission format (again as heuristic reachability).

|

||||

|

||||

### Task 6.3 – Optional Snyk / angr baselines

|

||||

|

||||

* Snyk:

|

||||

|

||||

* Use `snyk test` on the project

|

||||

* Map results to dependencies & known CVEs

|

||||

* For v1, just mark as `reachable` if Snyk reports a reachable path (if available).

|

||||

* angr:

|

||||

|

||||

* For 1–2 small C samples, configure simple analysis script.

|

||||

|

||||

**Acceptance criteria**

|

||||

|

||||

* For at least 5 cases (across languages), the baselines produce valid submission JSON.

|

||||

* `rb-score` runs and yields metrics without errors.

|

||||

|

||||

---

|

||||

|

||||

## 7. Phase 7 – CI/CD

|

||||

|

||||

### Task 7.1 – GitHub Actions workflow

|

||||

|

||||

**Developer:** Benchmark Core

|

||||

|

||||

`ci/github/benchmark.yml`:

|

||||

|

||||

Jobs:

|

||||

|

||||

1. `lint-and-test`

|

||||

|

||||

* `python -m pip install -e benchmark/tools/scorer[dev]`

|

||||

* `make lint`

|

||||

* `make test`

|

||||

|

||||

2. `build-cases`

|

||||

|

||||

* `python benchmark/tools/build/build_all.py`

|

||||

* Run `validate_builds.py`

|

||||

|

||||

3. `smoke-baselines`

|

||||

|

||||

* For 2–3 cases, run Semgrep/CodeQL wrappers and ensure they emit valid submissions.

|

||||

|

||||

### Task 7.2 – Artifact upload

|

||||

|

||||

* Upload `outputs/` tarball from `build-cases` as workflow artifacts.

|

||||

* Upload `results/*.json` from scoring runs.

|

||||

|

||||

---

|

||||

|

||||

## 8. Phase 8 – Website & leaderboard

|

||||

|

||||

### Task 8.1 – Define results JSON format

|

||||

|

||||

**Developer:** Benchmark Core + Website dev

|

||||

|

||||

`results/leaderboard.json`:

|

||||

|

||||

```json

|

||||

{

|

||||

"tools": [

|

||||

{

|

||||

"name": "Semgrep",

|

||||

"version": "1.60.0",

|

||||

"summary": {

|

||||

"precision": 0.72,

|

||||

"recall": 0.48,

|

||||

"f1": 0.58

|

||||

},

|

||||

"by_language": {

|

||||

"javascript": {"precision": 0.80, "recall": 0.50, "f1": 0.62},

|

||||

"python": {"precision": 0.65, "recall": 0.45, "f1": 0.53}

|

||||

}

|

||||

}

|

||||

]

|

||||

}

|

||||

```

|

||||

|

||||

CLI option to generate this:

|

||||

|

||||

```bash

|

||||

rb-score compare \

|

||||

--cases-root benchmark/cases \

|

||||

--submissions submissions/*.json \

|

||||

--output results/leaderboard.json

|

||||

```

|

||||

|

||||

### Task 8.2 – Static site

|

||||

|

||||

**Developer:** Website dev

|

||||

|

||||

Tech choice: any static framework (Next.js, Astro, Docusaurus, or even pure HTML+JS).

|

||||

|

||||

Pages:

|

||||

|

||||

* **Home**

|

||||

|

||||

* What is reachability?

|

||||

* Summary of benchmark

|

||||

|

||||

* **Leaderboard**

|

||||

|

||||

* Renders `leaderboard.json`

|

||||

* Filters: language, case size

|

||||

|

||||

* **Docs**

|

||||

|

||||

* How to run benchmark locally

|

||||

* How to prepare a submission

|

||||

|

||||

Add a simple script to copy `results/leaderboard.json` into `website/public/` for publishing.

|

||||

|

||||

---

|

||||

|

||||

## 9. Phase 9 – Docs, governance, and contribution flow

|

||||

|

||||

### Task 9.1 – CONTRIBUTING.md

|

||||

|

||||

Include:

|

||||

|

||||

* How to add a new case:

|

||||

|

||||

* Step‑by‑step:

|

||||

|

||||

1. Create project folder under `benchmark/cases/<lang>/<project>/case-XXX/`

|

||||

2. Add `case.yaml`, `entrypoints.yaml`, `truth.yaml`

|

||||

3. Add oracles (tests, coverage)

|

||||

4. Add deterministic `build/` assets

|

||||

5. Run local tooling:

|

||||

|

||||

* `validate_schema.py`

|

||||

* `validate_builds.py --case <id>`

|

||||

* Example PR description template.

|

||||

|

||||

### Task 9.2 – Governance doc

|

||||

|

||||

* Define **Technical Advisory Committee (TAC)** roles:

|

||||

|

||||

* Approve new cases

|

||||

* Approve schema changes

|

||||

* Manage hidden test sets (future phase)

|

||||

|

||||

* Define **release cadence**:

|

||||

|

||||

* v1.0 with public cases

|

||||

* Quarterly updates with new hidden cases.

|

||||

|

||||

---

|

||||

|

||||

## 10. Suggested milestone breakdown (for planning / sprints)

|

||||

|

||||

### Milestone 1 – Foundation (1–2 sprints)

|

||||

|

||||

* Repo scaffolding (Tasks 1.x)

|

||||

* Schemas (Tasks 2.x)

|

||||

* Two tiny toy cases (one JS, one Python) with:

|

||||

|

||||

* `case.yaml`, `entrypoints.yaml`, `truth.yaml`

|

||||

* Deterministic build

|

||||

* Basic unit tests

|

||||

* Minimal `rb-score` with:

|

||||

|

||||

* Case loading

|

||||

* Precision/recall only

|

||||

|

||||

**Exit:** You can run `rb-score` on a dummy submission for 2 cases.

|

||||

|

||||

---

|

||||

|

||||

### Milestone 2 – v1 dataset (2–3 sprints)

|

||||

|

||||

* Add ~20–30 cases across JS, Python, Java, C

|

||||

* Ground truth & coverage for each

|

||||

* Deterministic builds validated

|

||||

* Explainability scoring implemented

|

||||

* Regression tests for `rb-score`

|

||||

|

||||

**Exit:** Full scoring tool stable; dataset repeatably builds on CI.

|

||||

|

||||

---

|

||||

|

||||

### Milestone 3 – Baselines & site (1–2 sprints)

|

||||

|

||||

* Semgrep + CodeQL baselines producing valid submissions

|

||||

* CI running smoke baselines

|

||||

* `leaderboard.json` generator

|

||||

* Static website with public leaderboard and docs

|

||||

|

||||

**Exit:** Public v1 benchmark you can share with external tool authors.

|

||||

|

||||

---

|

||||

|

||||

If you tell me which stack your team prefers for the site (React, plain HTML, SSG, etc.) or which CI you’re on, I can adapt this into concrete config files (e.g., a full GitHub Actions workflow, Next.js scaffold, or exact `pyproject.toml` for `rb-score`).

|

||||

@@ -1,636 +0,0 @@

|

||||

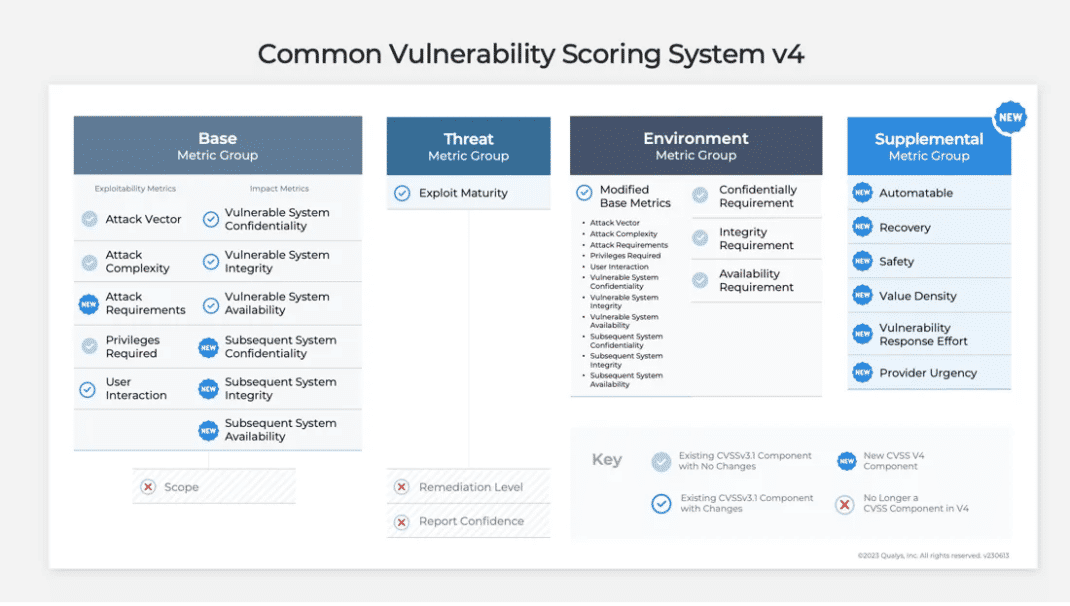

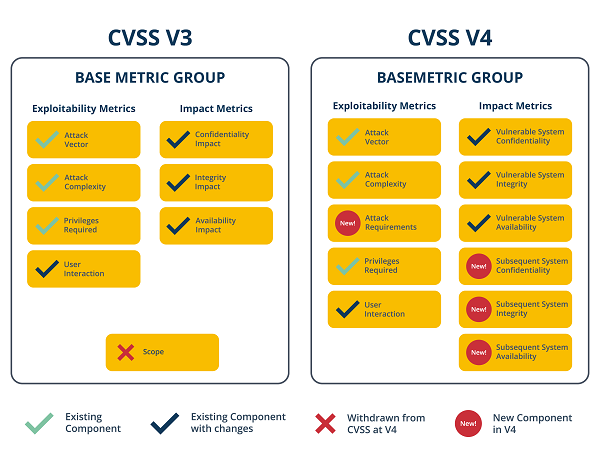

Here’s a compact, one‑screen “CVSS v4.0 Score Receipt” you can drop into Stella Ops so every vulnerability carries its score, evidence, and policy lineage end‑to‑end.

|

||||

|

||||

---

|

||||

|

||||

# CVSS v4.0 Score Receipt (CVSS‑BTE + Supplemental)

|

||||

|

||||

**Vuln ID / Title**

|

||||

**Final CVSS v4.0 Score:** *X.Y* (CVSS‑BTE) • **Vector:** `CVSS:4.0/...`

|

||||

**Why BTE?** CVSS v4.0 is designed to combine Base with default Threat/Environmental first, then amend with real context; Supplemental adds non‑scoring context. ([FIRST][1])

|

||||

|

||||

---

|

||||

|

||||

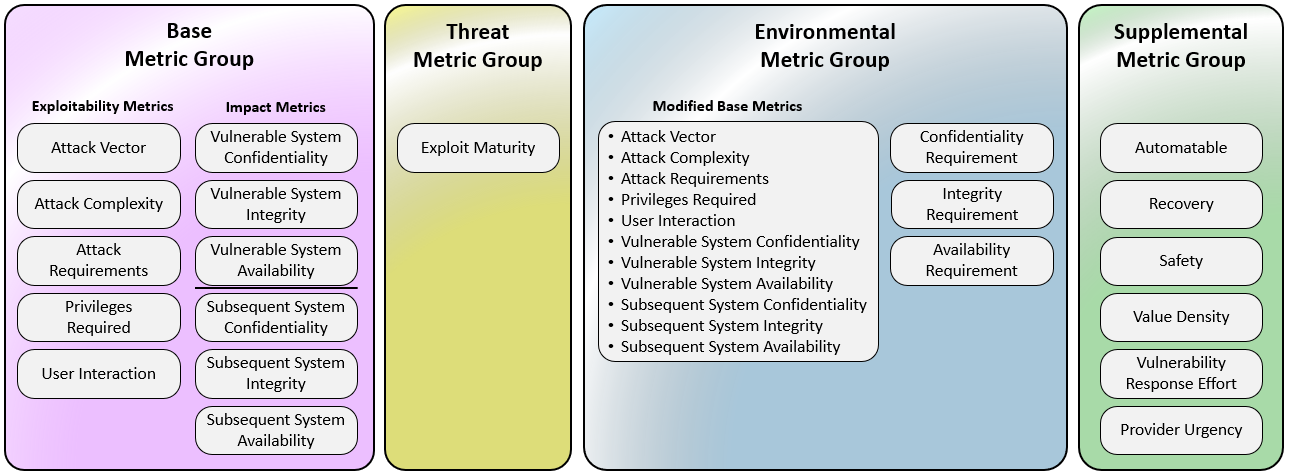

## 1) Base Metrics (intrinsic; vendor/researcher)

|

||||

|

||||

*List each metric with chosen value + short justification + evidence link.*

|

||||

|

||||

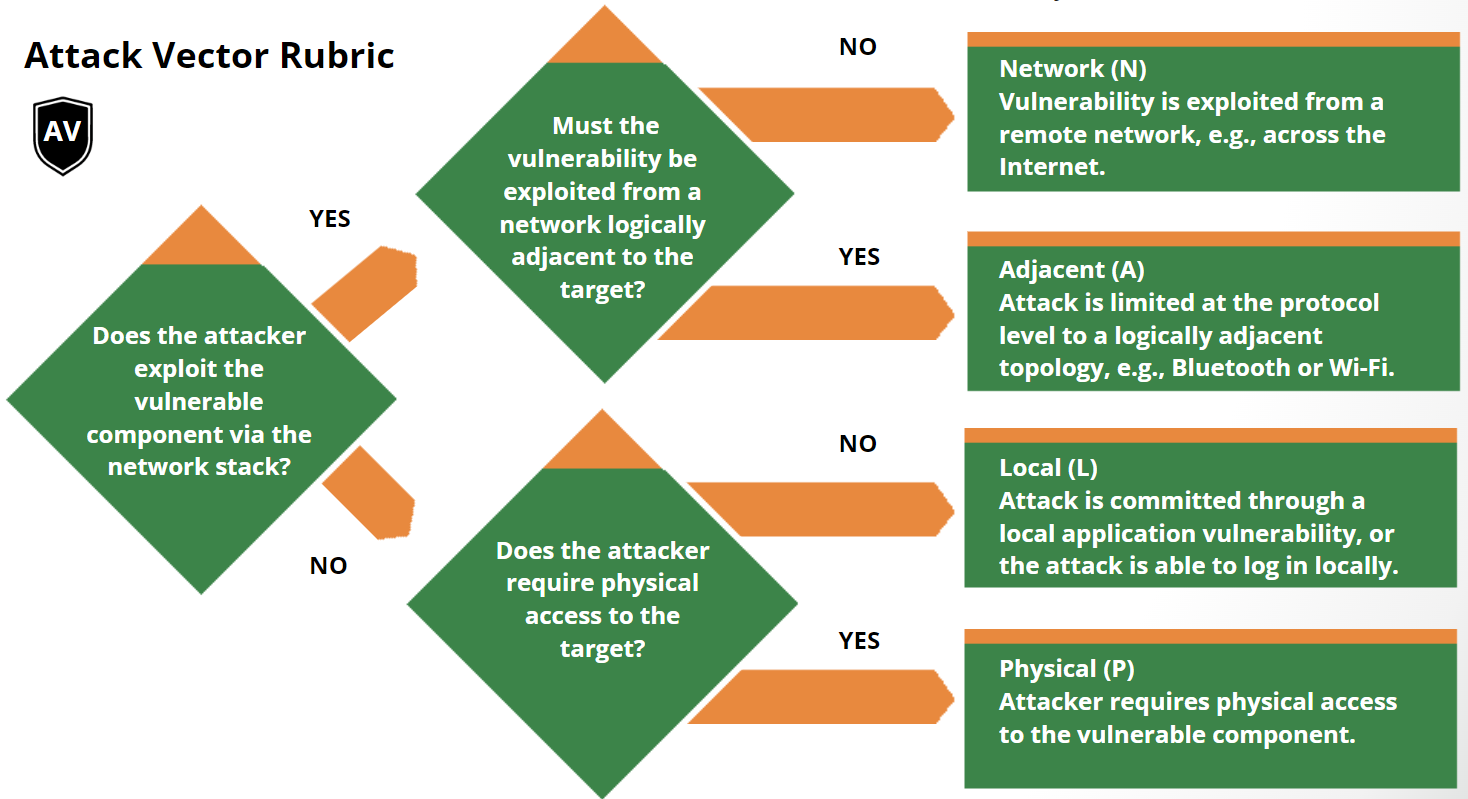

* **Attack Vector (AV):** N | A | I | P — *reason & evidence*

|

||||

* **Attack Complexity (AC):** L | H — *reason & evidence*

|

||||

* **Attack Requirements (AT):** N | P | ? — *reason & evidence*

|

||||

* **Privileges Required (PR):** N | L | H — *reason & evidence*

|

||||

* **User Interaction (UI):** Passive | Active — *reason & evidence*

|

||||

* **Vulnerable System Impact (VC/VI/VA):** H | L | N — *reason & evidence*

|

||||

* **Subsequent System Impact (SC/SI/SA):** H | L | N — *reason & evidence*

|

||||

|

||||

> Notes: v4.0 clarifies Base, splits vulnerable vs. subsequent system impact, and refines UI (Passive/Active). ([FIRST][1])

|

||||

|

||||

---

|

||||

|

||||

## 2) Threat Metrics (time‑varying; consumer)

|

||||

|

||||

* **Exploit Maturity (E):** Attacked | POC | Unreported | NotDefined — *intel & source*

|

||||

* **Automatable (AU):** Yes | No | ND — *tooling/observations*

|

||||

* **Provider Urgency (U):** High | Medium | Low | ND — *advisory/ref*

|

||||

|

||||

> Threat replaces the old Temporal concept and adjusts severity with real‑world exploitation context. ([FIRST][1])

|

||||

|

||||

---

|

||||

|

||||

## 3) Environmental Metrics (your environment)

|

||||

|

||||

* **Security Controls (CR/XR/AR):** Present | Partial | None — *control IDs*

|

||||

* **Criticality (S, H, L, N) of asset/service:** *business tag*

|

||||

* **Safety/Human Impact in your environment:** *if applicable*

|

||||

|

||||

> Environmental tailors the score to your environment (controls, importance). ([FIRST][1])

|

||||

|

||||

---

|

||||

|

||||

## 4) Supplemental (non‑scoring context)

|

||||

|

||||

* **Safety, Recovery, Value‑Density, Vulnerability Response Effort, etc.:** *values + short notes*

|

||||

|

||||

> Supplemental adds context but does not change the numeric score. ([FIRST][1])

|

||||

|

||||

---

|

||||

|

||||

## 5) Evidence Ledger

|

||||

|

||||

* **Artifacts:** logs, PoCs, packet captures, SBOM slices, call‑graphs, config excerpts

|

||||

* **References:** vendor advisory, NVD/First calculator snapshot, exploit write‑ups

|

||||

* **Timestamps & hash of each evidence item** (SHA‑256)

|

||||

|

||||

> Keep a permalink to the FIRST v4.0 calculator or NVD v4 calculator capture for audit. ([FIRST][2])

|

||||

|

||||

---

|

||||

|

||||

## 6) Policy & Determinism

|

||||

|

||||

* **Scoring Policy ID:** `cvss-policy-v4.0-stellaops-YYYYMMDD`

|

||||

* **Policy Hash:** `sha256:…` (of the JSON policy used to map inputs→metrics)

|

||||

* **Scoring Engine Version:** `stellaops.scorer vX.Y.Z`

|

||||

* **Repro Inputs Hash:** DSSE envelope including evidence URIs + CVSS vector

|

||||

|

||||

> Treat the receipt as a deterministic artifact: Base with default T/E, then amended with Threat+Environmental to produce CVSS‑BTE; store policy/evidence hashes for replayable audits. ([FIRST][1])

|

||||

|

||||

---

|

||||

|

||||

## 7) History (amendments over time)

|

||||

|

||||

| Date | Changed | From → To | Reason | Link |

|

||||

| ---------- | -------- | -------------- | ------------------------ | ----------- |

|

||||

| 2025‑11‑25 | Threat:E | POC → Attacked | Active exploitation seen | *intel ref* |

|

||||

|

||||

---

|

||||

|

||||

## Minimal JSON schema (for your UI/API)

|

||||

|

||||

```json

|

||||

{

|

||||

"vulnId": "CVE-YYYY-XXXX",

|

||||

"title": "Short vuln title",

|

||||

"cvss": {

|

||||

"version": "4.0",

|

||||

"vector": "CVSS:4.0/…",

|

||||

"base": { "AV": "N", "AC": "L", "AT": "N", "PR": "N", "UI": "P", "VC": "H", "VI": "H", "VA": "H", "SC": "L", "SI": "N", "SA": "N", "justifications": { /* per-metric text + evidence URIs */ } },

|

||||

"threat": { "E": "Attacked", "AU": "Yes", "U": "High", "evidence": [/* intel links */] },

|

||||

"environmental": { "controls": { "CR": "Present", "XR": "Partial", "AR": "None" }, "criticality": "H", "notes": "…" },

|

||||

"supplemental": { "safety": "High", "recovery": "Hard", "notes": "…" },

|

||||

"finalScore": 9.1,

|

||||

"enumeration": "CVSS-BTE"

|

||||

},

|

||||

"evidence": [{ "name": "exploit_poc.md", "sha256": "…", "uri": "…" }],

|

||||

"policy": { "id": "cvss-policy-v4.0-stellaops-20251125", "sha256": "…", "engine": "stellaops.scorer 1.2.0" },

|

||||

"repro": { "dsseEnvelope": "base64…", "inputsHash": "sha256:…" },

|

||||

"history": [{ "date": "2025-11-25", "change": "Threat:E POC→Attacked", "reason": "SOC report", "ref": "…" }]

|

||||

}

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Drop‑in UI wireframe (single screen)

|

||||

|

||||

* **Header bar:** Score badge (X.Y), “CVSS‑BTE”, vector copy button.

|

||||

* **Tabs (or stacked cards):** Base • Threat • Environmental • Supplemental • Evidence • Policy • History.

|

||||

* **Right rail:** “Recalculate with my env” (edits only Threat/Environmental), “Export receipt (JSON/PDF)”, “Open in FIRST/NVD calculator”.

|

||||

|

||||

---

|

||||

|

||||

If you want, I’ll adapt this to your Stella Ops components (DTOs, EF Core models, and a Razor/Blazor card) and wire it to your “deterministic replay” pipeline so every scan emits this receipt alongside the VEX note.

|

||||

|

||||

[1]: https://www.first.org/cvss/v4-0/specification-document?utm_source=chatgpt.com "CVSS v4.0 Specification Document"

|

||||

[2]: https://www.first.org/cvss/calculator/4-0?utm_source=chatgpt.com "Common Vulnerability Scoring System Version 4.0 Calculator"

|

||||

Perfect, let’s turn that receipt idea into a concrete implementation plan your devs can actually build from.

|

||||

|

||||

I’ll break it into phases and responsibilities (backend, frontend, platform/DevOps), with enough detail that someone could start creating tickets from this.

|

||||

|

||||

---

|

||||

|

||||

## 0. Align on Scope & Definitions

|

||||

|

||||

**Goal:** For every vulnerability in Stella Ops, store and display a **CVSS v4.0 CVSS‑BTE score receipt** that is:

|

||||

|

||||

* Deterministic & reproducible (policy + inputs → same score).

|

||||

* Evidenced (links + hashes of artifacts).

|

||||

* Auditable over time (history of amendments).

|

||||

* Friendly to both **vendor/base** and **consumer/threat/env** workflows.

|

||||

|

||||

**Key concepts to lock in with the team (no coding yet):**

|

||||

|

||||

* **Primary object**: `CvssScoreReceipt` attached to a `Vulnerability`.

|

||||

* **Canonical score** = **CVSS‑BTE** (Base + Threat + Environmental).

|

||||

* **Base** usually from vendor/researcher; Threat + Environmental from Stella Ops / customer context.

|

||||

* **Supplemental** metrics: stored but **not part of numeric score**.

|

||||

* **Policy**: machine-readable config (e.g., JSON) that defines how you map questionnaire/inputs → CVSS metrics.

|

||||

|

||||

Deliverable: 2–3 page internal spec summarizing above for devs + PMs.

|

||||

|

||||

---

|

||||

|

||||

## 1. Data Model Design

|

||||

|

||||

### 1.1 Core Entities

|

||||

|

||||

*Model names are illustrative; adapt to your stack.*

|

||||

|

||||

**Vulnerability**

|

||||

|

||||

* `id`

|

||||

* `externalId` (e.g. CVE)

|

||||

* `title`

|

||||

* `description`

|

||||

* `currentCvssReceiptId` (FK → `CvssScoreReceipt`)

|

||||

|

||||

**CvssScoreReceipt**

|

||||

|

||||

* `id`

|

||||

* `vulnerabilityId` (FK)

|

||||

* `version` (e.g. `"4.0"`)

|

||||

* `enumeration` (e.g. `"CVSS-BTE"`)

|

||||

* `vectorString` (full v4.0 vector)

|

||||

* `finalScore` (numeric, 0.0–10.0)

|

||||

* `baseScore` (derived or duplicate for convenience)

|

||||

* `threatScore` (optional interim)

|

||||

* `environmentalScore` (optional interim)

|

||||

* `createdAt`

|

||||

* `createdByUserId`

|

||||

* `policyId` (FK → `CvssPolicy`)

|

||||

* `policyHash` (sha256 of policy JSON)

|

||||

* `inputsHash` (sha256 of normalized scoring inputs)

|

||||

* `dsseEnvelope` (optional text/blob if you implement full DSSE)

|

||||

* `metadata` (JSON for any extras you want)

|

||||

|

||||

**BaseMetrics (v4.0)**

|

||||

|

||||

* `id`, `receiptId` (FK)

|

||||

* `AV`, `AC`, `AT`, `PR`, `UI`

|

||||

* `VC`, `VI`, `VA`, `SC`, `SI`, `SA`

|

||||

* `justifications` (JSON object keyed by metric)

|

||||

|

||||

* e.g. `{ "AV": { "reason": "...", "evidenceIds": ["..."] }, ... }`

|

||||

|

||||

**ThreatMetrics**

|

||||

|

||||

* `id`, `receiptId` (FK)

|

||||

* `E` (Exploit Maturity)

|

||||

* `AU` (Automatable)

|

||||

* `U` (Provider/Consumer Urgency)

|

||||

* `evidence` (JSON: list of intel references)

|

||||

|

||||

**EnvironmentalMetrics**

|

||||

|

||||

* `id`, `receiptId` (FK)

|

||||

* `CR`, `XR`, `AR` (controls)

|

||||

* `criticality` (S/H/L/N or your internal enum)

|

||||

* `notes` (text/JSON)

|

||||

|

||||

**SupplementalMetrics**

|

||||

|

||||

* `id`, `receiptId` (FK)

|

||||

* Fields you care about, e.g.:

|

||||

|

||||

* `safetyImpact`

|

||||

* `recoveryEffort`

|

||||

* `valueDensity`

|

||||

* `vulnerabilityResponseEffort`

|

||||

* `notes`

|

||||

|

||||

**EvidenceItem**

|

||||

|

||||

* `id`

|

||||

* `receiptId` (FK)

|

||||

* `name` (e.g. `"exploit_poc.md"`)

|

||||

* `uri` (link into your blob store, S3, etc.)

|

||||

* `sha256`

|

||||

* `type` (log, pcap, exploit, advisory, config, etc.)

|

||||

* `createdAt`

|

||||

* `createdBy`

|

||||

|

||||

**CvssPolicy**

|

||||

|

||||

* `id` (e.g. `cvss-policy-v4.0-stellaops-20251125`)

|

||||

* `name`

|

||||

* `version`

|

||||

* `engineVersion` (e.g. `stellaops.scorer 1.2.0`)

|

||||

* `policyJson` (JSON)

|

||||

* `sha256` (policy hash)

|

||||

* `active` (bool)

|

||||

* `validFrom`, `validTo` (optional)

|

||||

|

||||

**ReceiptHistoryEntry**

|

||||

|

||||

* `id`

|

||||

* `receiptId` (FK)

|

||||

* `date`

|

||||

* `changedField` (e.g. `"Threat.E"`)

|

||||

* `oldValue`

|

||||

* `newValue`

|

||||

* `reason`

|

||||

* `referenceUri` (link to ticket / intel)

|

||||

* `changedByUserId`

|

||||

|

||||

---

|

||||

|

||||

## 2. Backend Implementation Plan

|

||||

|

||||

### 2.1 Scoring Engine

|

||||

|

||||

**Tasks:**

|

||||

|

||||

1. **Create a `CvssV4Engine` module/package** with:

|

||||

|

||||

* `parseVector(string): CvssVector`

|

||||

* `computeBaseScore(metrics: BaseMetrics): number`

|

||||

* `computeThreatAdjustedScore(base: number, threat: ThreatMetrics): number`

|

||||

* `computeEnvironmentalAdjustedScore(threatAdjusted: number, env: EnvironmentalMetrics): number`

|

||||

* `buildVector(metrics: BaseMetrics & ThreatMetrics & EnvironmentalMetrics): string`

|

||||

2. Implement **CVSS v4.0 math** exactly per spec (rounding rules, minimums, etc.).

|

||||

3. Add **unit tests** for all official sample vectors + your own edge cases.

|

||||

|

||||

**Deliverables:**

|

||||

|

||||

* Test suite `CvssV4EngineTests` with:

|

||||

|

||||

* Known test vectors (from spec or FIRST calculator)

|

||||

* Edge cases: missing threat/env, zero-impact vulnerabilities, etc.

|

||||

|

||||

---

|

||||

|

||||

### 2.2 Receipt Construction Pipeline

|

||||

|

||||

Define a canonical function in backend:

|

||||

|

||||

```pseudo

|

||||

function createReceipt(vulnId, input, policyId, userId):

|

||||

policy = loadPolicy(policyId)

|

||||

normalizedInput = applyPolicy(input, policy) // map UI questionnaire → CVSS metrics

|

||||

|

||||

base = normalizedInput.baseMetrics

|

||||

threat = normalizedInput.threatMetrics

|

||||

env = normalizedInput.environmentalMetrics

|

||||

supplemental = normalizedInput.supplemental

|

||||

|

||||

// Score

|

||||

baseScore = CvssV4Engine.computeBaseScore(base)

|

||||

threatScore = CvssV4Engine.computeThreatAdjustedScore(baseScore, threat)

|

||||

finalScore = CvssV4Engine.computeEnvironmentalAdjustedScore(threatScore, env)

|

||||

|

||||

// Vector

|

||||

vector = CvssV4Engine.buildVector({base, threat, env})

|

||||

|

||||

// Hashes

|

||||

inputsHash = sha256(serializeForHashing({ base, threat, env, supplemental, evidenceRefs: input.evidenceIds }))

|

||||

policyHash = policy.sha256

|

||||

dsseEnvelope = buildDSSEEnvelope({ vulnId, base, threat, env, supplemental, policyId, policyHash, inputsHash })

|

||||

|

||||

// Persist entities in transaction

|

||||

receipt = saveCvssScoreReceipt(...)

|

||||

saveBaseMetrics(receipt.id, base)

|

||||

saveThreatMetrics(receipt.id, threat)

|

||||

saveEnvironmentalMetrics(receipt.id, env)

|

||||

saveSupplementalMetrics(receipt.id, supplemental)

|

||||

linkEvidence(receipt.id, input.evidenceItems)

|

||||

|

||||

updateVulnerabilityCurrentReceipt(vulnId, receipt.id)

|

||||

|

||||

return receipt

|

||||

```

|

||||

|

||||

**Important implementation details:**

|

||||

|

||||

* **`serializeForHashing`**: define a stable ordering and normalization (sorted keys, no whitespace sensitivity, canonical enums) so hashes are truly deterministic.

|

||||

* Use **transactions** so partial writes never leave `Vulnerability` pointing to incomplete receipts.

|

||||

* Ensure **idempotency**: if same `inputsHash + policyHash` already exists for that vuln, you can either:

|

||||

|

||||

* return existing receipt, or

|

||||

* create a new one but mark it as a duplicate-of; choose one rule and document it.

|

||||

|

||||

---

|

||||

|

||||

### 2.3 APIs

|

||||

|

||||

Design REST/GraphQL endpoints (adapt names to your style):

|

||||

|

||||

**Read:**

|

||||

|

||||

* `GET /vulnerabilities/{id}/cvss-receipt`

|

||||

|

||||

* Returns full receipt with nested metrics, evidence, policy metadata, history.

|

||||

* `GET /vulnerabilities/{id}/cvss-receipts`

|

||||

|

||||

* List historical receipts/versions.

|

||||

|

||||

**Create / Update:**

|

||||

|

||||

* `POST /vulnerabilities/{id}/cvss-receipt`

|

||||

|

||||

* Body: CVSS input payload (not raw metrics) + policyId.

|

||||

* Backend applies policy → metrics, computes scores, stores receipt.

|

||||

* `POST /vulnerabilities/{id}/cvss-receipt/recalculate`

|

||||

|

||||

* Optional: allows updating **only Threat + Environmental** while preserving Base.

|

||||

|

||||

**Evidence:**

|

||||

|

||||

* `POST /cvss-receipts/{receiptId}/evidence`

|

||||

|

||||

* Upload/link evidence artifacts, compute sha256, associate with receipt.

|

||||

* (Or integrate with your existing evidence/attachments service and only store references.)

|

||||

|

||||

**Policy:**

|

||||

|

||||

* `GET /cvss-policies`

|

||||

* `GET /cvss-policies/{id}`

|

||||

|

||||

**History:**

|

||||

|

||||

* `GET /cvss-receipts/{receiptId}/history`

|

||||

|

||||

Add auth/authorization:

|

||||

|

||||

* Only certain roles can **change Base**.

|

||||

* Different roles can **change Threat/Env**.

|

||||

* Audit logs for each change.

|

||||

|

||||

---

|

||||

|

||||

### 2.4 Integration with Existing Pipelines

|

||||

|

||||

**Automatic creation paths:**

|

||||

|

||||

1. **Scanner import path**

|

||||

|

||||

* When new vulnerability is imported with vendor CVSS v4:

|

||||

|

||||

* Parse vendor vector → BaseMetrics.

|

||||

* Use your default policy to set Threat/Env to “NotDefined”.

|

||||

* Generate initial receipt (tag as `source = "vendor"`).

|

||||

|

||||

2. **Manual analyst scoring**

|

||||

|

||||

* Analyst opens Vuln in Stella Ops UI.

|

||||

* Fills out guided form.

|

||||

* Frontend calls `POST /vulnerabilities/{id}/cvss-receipt`.

|

||||

|

||||

3. **Customer-specific Environmental scoring**

|

||||

|

||||

* Per-tenant policy stored in `CvssPolicy`.

|

||||

* Receipts store that policyId; calculating environment-specific scores uses those controls/criticality.

|

||||

|

||||

---

|

||||

|

||||

## 3. Frontend / UI Implementation Plan

|

||||

|

||||

### 3.1 Main “CVSS Score Receipt” Panel

|

||||

|

||||

Single screen/card with sections (tabs or accordions):

|

||||

|

||||

1. **Header**

|

||||

|

||||

* Large score badge: `finalScore` (e.g. 9.1).

|

||||

* Label: `CVSS v4.0 (CVSS‑BTE)`.

|

||||

* Color-coded severity (Low/Med/High/Critical).

|

||||

* Copy-to-clipboard for vector string.

|

||||

* Show Base/Threat/Env sub-scores if you choose to expose.

|

||||

|

||||

2. **Base Metrics Section**

|

||||

|

||||

* Table or form-like display:

|

||||

|

||||

* Each metric: value, short textual description, collapsed justification with “View more”.

|

||||

* Example row:

|

||||

|

||||

* **Attack Vector (AV)**: Network

|

||||

|

||||

* “The vulnerability is exploitable over the internet. PoC requires only TCP connectivity to port 443.”

|

||||

* Evidence chips: `exploit_poc.md`, `nginx_error.log.gz`.

|

||||

|

||||

3. **Threat Metrics Section**

|

||||

|

||||

* Radio/select controls for Exploit Maturity, Automatable, Urgency.

|

||||

* “Intel references” list (URLs or evidence items).

|

||||

* If the user edits these and clicks **Save**, frontend:

|

||||

|

||||

* Builds Threat input payload.

|

||||

* Calls `POST /vulnerabilities/{id}/cvss-receipt/recalculate` with updated threat/env only.

|

||||

* Shows new score & appends a `ReceiptHistoryEntry`.

|

||||

|

||||

4. **Environmental Section**

|

||||

|

||||

* Controls selection: Present / Partial / None.

|

||||

* Business criticality picker.

|

||||

* Contextual notes.

|

||||

* Same recalc flow as Threat.

|

||||

|

||||

5. **Supplemental Section**

|

||||

|

||||

* Non-scoring fields with clear label: “Does not affect numeric score, for context only”.

|

||||

|

||||

6. **Evidence Section**

|

||||

|

||||

* List of evidence items with:

|

||||

|

||||

* Name, type, hash, link.

|

||||

* “Attach evidence” button → upload / select existing artifact.

|

||||

|

||||

7. **Policy & Determinism Section**

|

||||

|

||||

* Display:

|

||||

|

||||

* Policy ID + hash.

|

||||

* Scoring engine version.

|

||||

* Inputs hash.

|

||||

* DSSE status (valid / not verified).

|

||||

* Button: **“Download receipt (JSON)”** – uses the JSON schema you already drafted.

|

||||

* Optional: **“Open in external calculator”** with vector appended as query parameter.

|

||||

|

||||

8. **History Section**

|

||||

|

||||

* Timeline of changes:

|

||||

|

||||

* Date, who, what changed (e.g. `Threat.E: POC → Attacked`).

|

||||

* Reason + link.

|

||||

|

||||

### 3.2 UX Considerations

|

||||

|

||||

* **Guardrails:**

|

||||

|

||||

* Editing Base metrics: show “This should match vendor or research data. Changing Base will alter historical comparability.”

|

||||

* Display last updated time & user for each metrics block.

|

||||

* **Permissions:**

|

||||

|

||||

* Disable inputs if user does not have edit rights; still show receipts read-only.

|

||||

* **Error Handling:**

|

||||

|

||||

* Show vector parse or scoring errors clearly, with a reference to policy/engine version.

|

||||

* **Accessibility:**

|

||||

|

||||

* High contrast for severity badges and clear iconography.

|

||||

|

||||

---

|

||||

|

||||

## 4. JSON Schema & Contracts

|

||||

|

||||

You already have a draft JSON; turn it into a formal schema (OpenAPI / JSON Schema) so backend + frontend are in sync.

|

||||

|

||||

Example top-level shape (high-level, not full code):

|

||||

|

||||

```json

|

||||

{

|

||||

"vulnId": "CVE-YYYY-XXXX",

|

||||

"title": "Short vuln title",

|

||||

"cvss": {

|

||||

"version": "4.0",

|

||||

"enumeration": "CVSS-BTE",

|

||||

"vector": "CVSS:4.0/...",

|

||||

"finalScore": 9.1,

|

||||

"baseScore": 8.7,

|

||||

"threatScore": 9.0,

|

||||

"environmentalScore": 9.1,

|

||||

"base": {

|

||||

"AV": "N", "AC": "L", "AT": "N", "PR": "N", "UI": "P",

|

||||

"VC": "H", "VI": "H", "VA": "H",

|

||||

"SC": "L", "SI": "N", "SA": "N",

|

||||

"justifications": {

|

||||

"AV": { "reason": "reachable over internet", "evidence": ["ev1"] }

|

||||

}

|

||||

},

|

||||

"threat": { "E": "Attacked", "AU": "Yes", "U": "High" },

|

||||

"environmental": { "controls": { "CR": "Present", "XR": "Partial", "AR": "None" }, "criticality": "H" },

|

||||

"supplemental": { "safety": "High", "recovery": "Hard" }

|

||||

},

|

||||

"evidence": [

|

||||

{ "id": "ev1", "name": "exploit_poc.md", "uri": "...", "sha256": "..." }

|

||||

],

|

||||

"policy": {

|

||||

"id": "cvss-policy-v4.0-stellaops-20251125",

|

||||

"sha256": "...",

|

||||

"engine": "stellaops.scorer 1.2.0"

|

||||

},

|

||||

"repro": {

|

||||

"dsseEnvelope": "base64...",

|

||||

"inputsHash": "sha256:..."

|

||||

},

|

||||

"history": [

|

||||

{ "date": "2025-11-25", "change": "Threat.E POC→Attacked", "reason": "SOC report", "ref": "..." }

|

||||

]

|

||||

}

|

||||

```

|

||||

|

||||

Back-end team: publish this via OpenAPI and keep it versioned.

|

||||

|

||||

---

|

||||

|

||||

## 5. Security, Integrity & Compliance

|

||||

|

||||

**Tasks:**

|

||||

|

||||

1. **Evidence Integrity**

|

||||

|

||||

* Enforce sha256 on every evidence item.

|

||||

* Optionally re-hash blob in background and store `verifiedAt` timestamp.

|

||||

|

||||

2. **Immutability**

|

||||

|

||||

* Decide which parts of a receipt are immutable:

|

||||

|

||||

* Typically: Base metrics, evidence links, policy references.

|

||||

* Threat/Env may change by creating **new receipts** or new “versions” of the same receipt.

|

||||

* Consider:

|

||||

|

||||

* “Current receipt” pointer on Vulnerability.

|

||||

* All receipts are read-only after creation; changes create new receipt + history entry.

|

||||

|

||||

3. **Audit Logging**

|

||||

|

||||

* Log who changed what (especially Threat/Env).

|

||||

* Store reference to ticket / change request.

|

||||

|

||||

4. **Access Control**

|

||||

|

||||

* RBAC: e.g. `ROLE_SEC_ENGINEER` can set Base; `ROLE_CUSTOMER_ANALYST` can set Env; `ROLE_VIEWER` read-only.

|

||||

|

||||

---

|

||||

|

||||

## 6. Testing Strategy

|

||||

|

||||

**Unit Tests**

|

||||

|

||||

* `CvssV4EngineTests` – coverage of:

|

||||

|

||||

* Vector parsing/serialization.

|

||||

* Calculations for B, BT, BTE.

|

||||

* `ReceiptBuilderTests` – determinism:

|

||||

|

||||

* Same inputs + policy → same score + same hashes.

|

||||

* Different policyId → different policyHash, different DSSE, even if metrics identical.

|

||||

|

||||

**Integration Tests**

|

||||

|

||||

* End-to-end:

|

||||

|

||||

* Create vulnerability → create receipt with Base only → update Threat → update Env.

|

||||

* Vendor CVSS import path.

|

||||

* Permission tests:

|

||||

|

||||

* Ensure unauthorized edits are blocked.

|

||||

|

||||

**UI Tests**

|

||||

|

||||

* Snapshot tests for the card layout.

|

||||

* Behavior: changing Threat slider updates preview score.

|

||||

* Accessibility checks (ARIA, focus order).

|

||||

|

||||

---

|

||||

|

||||

## 7. Rollout Plan

|

||||

|

||||

1. **Phase 1 – Backend Foundations**

|

||||

|

||||